I’ve been testing Monica AI’s text humanizer on different types of content (blog posts, emails, and social captions), but I’m not sure if the output is actually natural enough or safe to use long-term. Some parts feel a bit generic or possibly detectable as AI. Can anyone with experience share a detailed review, pros and cons, and tips for getting the most human-sounding results from Monica AI Humanizer?

Monica AI Humanizer review – from someone who tried to make it work and failed

Monica’s humanizer is one of those tools where you click a single button and hope the output passes detectors. No settings, no sliders, no tone choices, no modes. You feed it text, you get text back, that is it.

I went in pretty open to it, but the tests were rough.

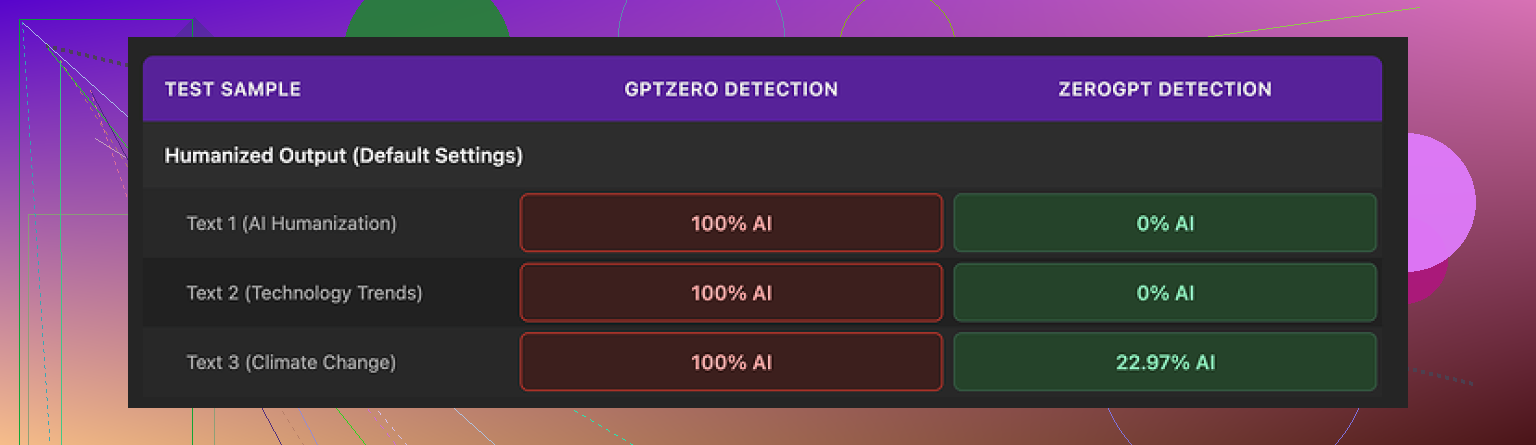

Full AI on GPTZero, partial win on ZeroGPT

I pushed multiple samples through Monica’s humanizer, then ran them through a couple of detectors.

Main two I used:

- GPTZero

- ZeroGPT

Results:

- GPTZero tagged every single Monica output as 100% AI. No edge cases, no near misses. Straight 100% across the board.

- ZeroGPT was less harsh. Out of three samples:

  - Two showed 0% AI probability

  - One landed around 23%

So if your teacher, client, or platform uses ZeroGPT, you might slip through sometimes. If they use GPTZero, you are exposed. Since you have no control over style or intensity, you cannot tune the output for one detector or another. You are stuck with whatever it spits out.

That lack of control was the main dealbreaker for me.

Quality of writing: weird errors and strange edits

On top of the detection issue, the writing quality felt off. I’d give it around 4 out of 10 for “does this look like a normal human wrote it.”

Specific problems I ran into:

- It added typos where there were none

  - One output turned “But” into “Ubt,” which looks like a keyboard slip, but the source text was clean. So the humanizer made it worse.

- It sometimes changed punctuation in inconsistent ways

  - It removed some apostrophes where they were needed.

  - It added apostrophes in places that did not require them.

- One output started with “[ABSTRACT” for no clear reason

  - I did not feed it a research paper or anything in that format. It just dropped “[ABSTRACT” at the front and moved on like that was normal.

- It kept em dashes from the original AI text and seemed to add more

  - For a tool that is supposed to make writing look less AI-like, leaning into long, structured sentences with em dashes is not a great sign. Those patterns often trigger detectors.

The edits felt random instead of intentional. You want a humanizer to change structure, rhythm, and word choice in a targeted way. Monica’s version behaved more like a noisy filter.

Pricing and where the humanizer fits in Monica

Price-wise, Monica’s Pro plan on annual billing starts around $8.30 per month. The important detail here is that Monica is not built around the humanizer at all. It is an all-in-one AI platform that includes:

- Chatbots

- Image tools

- Video features

- And somewhere in there, the humanizer

So the humanizer is more of an extra option than the core product.

If you already use Monica for chat or media features and you are on a paid plan, the humanizer feels like a free add-on to experiment with. In that case, no harm in trying it on small stuff where detection does not matter.

If your main goal is to bypass AI detectors for text, this tool does not hold up. Between the GPTZero failures and the messy editing, it is hard to trust.

What I ended up using instead

I compared Monica’s humanizer to Clever AI Humanizer, tested both against the same detectors, and checked the writing side by side.

My results:

- Clever AI Humanizer produced cleaner text with fewer odd glitches.

- It performed better on detection tests in my runs.

- It did not require payment, which makes testing much less painful.

If you want the detailed comparison and screenshots, the thread is here:

Bottom line from my experience

- If you already pay for Monica and you are curious, treat the humanizer as a bonus tool to play with.

- If your priority is getting past detectors, especially GPTZero, I would skip Monica’s humanizer and look elsewhere.

I’ve been testing Monica AI’s text humanizer on different types of content, including blog posts, work emails, and social media captions, but I’m not sure if the output feels natural enough or safe to rely on for long-term use. Some parts of the text sound stiff or slightly robotic, and a few sentences look off, like they were edited by an AI that tries to imitate small human errors without understanding context.

Here is my take after playing with it for a while, plus what I would do if you want something that feels safer.

Naturalness and tone

For short stuff like social captions and casual emails, Monica sometimes does an ok job. The text often reads “fine” on a quick skim. The problem shows up when you read slower or stack a few paragraphs. You start to see repeated patterns, similar sentence lengths, and weird word choices. It feels like the same voice, no matter what you feed in.

I slightly disagree with @mikeappsreviewer on one point. I do not think it is totally useless if you already have strong source text. If you write most of it yourself and run it through as a light touch, it sometimes softens obvious AI phrases. But it does not adapt well to different tones, such as joking, technical, or emotional content.

Safety for long-term use

If you care about long-term safety for client work, academic stuff, or anything tied to your name, I would not rely on it alone.

Reasons:

• No control over style, temperature, or “human level”

• Occasional typos that look fake, like forced errors

• Odd structural changes that do not match your usual voice

If a teacher or editor compares a Monica-edited piece to your older work, the shift will stand out. It does not learn your style, it applies its own.

AI detection angle

You already saw what @mikeappsreviewer found with GPTZero and ZeroGPT. My runs looked similar.

Monica output:

• Often triggered GPTZero as AI content

• Sometimes slipped past ZeroGPT

Detectors change fast, and they use different signals. Relying on a one-click humanizer as a long-term “shield” is risky. If your main goal is to avoid flags, you need a mix of:

• Your own rewriting

• Shorter paragraphs

• More personal details and concrete examples

• Sentence variety, not only medium-length neutral lines
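If you want a rough number for the "sentence variety" point, here is a minimal Python sketch. It is only my own illustration, not how any detector works: the function names and the two sample strings are made up, and splitting sentences on `.`, `!`, and `?` is a crude assumption that will trip on abbreviations.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, ? (a crude assumption) and return each sentence's word count."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_spread(text: str) -> tuple[float, float]:
    """Return (mean, population standard deviation) of sentence lengths in words."""
    lengths = sentence_lengths(text)
    if not lengths:
        return 0.0, 0.0
    return statistics.mean(lengths), statistics.pstdev(lengths)


# Hypothetical samples: one with uniform sentences, one with mixed lengths.
uniform = "The tool is quite simple. The output is fairly clean. The price is rather low."
varied = (
    "I tried it. Honestly, the first few outputs looked fine "
    "until I read them slowly and out loud. Then no."
)

for label, text in (("uniform", uniform), ("varied", varied)):
    mean, spread = length_spread(text)
    print(f"{label}: mean={mean:.1f} words, spread={spread:.1f}")
```

A spread near zero means every sentence is about the same length, which is the flat rhythm described above. Treat it as a prompt to reread the text, not as a pass/fail score.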

Writing quality issues I noticed

On top of the detector stuff, I saw:

• Strange punctuation edits, like random commas removed or inserted

• Sudden formal phrases in casual posts

• Reuse of the same connectors at the start of many sentences

You asked about blog posts, emails, and captions. For blog posts, the problems show the most. Longer text makes patterns more obvious and the “AI” feel grows. Email is less bad, especially if you add your own edits after. Captions can work if you keep them short and inject a little of your slang.
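The repeated-connector problem is easy to check yourself. Below is a small Python sketch, again just an illustration under my own assumptions: the helper names and the sample text are hypothetical, and the sentence splitting is deliberately simple.

```python
import re
from collections import Counter


def opener_counts(text: str) -> Counter:
    """Count the first word (lowercased, trailing punctuation stripped) of each sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    openers = [s.split()[0].lower().strip(",;:") for s in sentences]
    return Counter(openers)


def repeated_openers(text: str, min_count: int = 2) -> list[tuple[str, int]]:
    """Return openers used at least min_count times, most frequent first."""
    return [(w, n) for w, n in opener_counts(text).most_common() if n >= min_count]


# Hypothetical sample with an overused connector.
sample = (
    "However, the tool is fast. However, the output is stiff. "
    "Additionally, it adds typos. However, it is cheap."
)

print(repeated_openers(sample))
```

If the same opener keeps topping the list across several outputs, that is the "same voice" pattern showing up, and those are the sentences worth rewriting by hand first.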

What I would do instead

If you still want an AI helper in your flow, I would use Monica as a secondary tool, not as a core humanizer. For example:

• Draft with your usual AI or by hand

• Use Monica only on small chunks that sound stiff

• Then manually edit again to match your voice

For a focused humanizer with better control and cleaner output, I had better luck with Clever AI Humanizer. It produced fewer strange glitches and felt closer to normal human writing. If you want to experiment, try it and compare outputs on your own blog posts and emails.

Practical setup you can try

If you want something safer over time:

• Keep your paragraphs shorter

• Use your real stories, examples, and opinions

• Run text through a humanizer like Clever AI Humanizer only as a soft edit

• Read final output out loud, fix anything you would not say in real life

• Save a few old samples of your writing and compare style each time

If any tool keeps giving you the same “voice” on every piece, do not use it as your main layer. Treat it like a spellchecker with extra steps, not a magic fix.

Monica’s humanizer feels like a “fun extra,” not something I’d build a workflow around long term, especially for blogs or anything tied to your name or job.

I kinda split with @mikeappsreviewer and @sternenwanderer on one thing: I do not think raw detector scores alone should decide if you use it or not. Detectors are noisy and change all the time. I care more about: does this actually sound like me, and would I be ok defending this text if someone asked “did you write this?”

On that front, Monica is shaky:

- The voice it produces is very samey over multiple tests

- The small “human-like mistakes” feel artificial, like it is sprinkling typos and odd punctuation just to look messy

- Long form content exposes patterns fast, especially in blog posts and detailed emails

For social captions and quick internal emails, it is not horrible if you treat it as a light touch and then fix the bits that sound off. For client content, academic stuff or anything that might be compared to your older writing, I would not trust it as the main layer. It does not adapt to your tone, it just overlays its own.

If you want an actual “humanizer” in the stack, a dedicated tool makes more sense. Clever AI Humanizer has come up a few times in this thread, and in this context I would at least trial it side by side with Monica. You can experiment with making AI-generated text sound more natural on the same blog posts, emails and captions you are already testing. Run both outputs, then:

- Read them out loud

- Check which one you can edit faster into your real voice

- Ignore the detectors for a moment and trust your ear

If a tool keeps giving you text that feels stiff, repetitive or “AI in disguise,” it is not safe long term, no matter how it scores today. In that case, Monica is better treated as a side toy, not your main writing engine.

Short version: Monica’s humanizer is “okay as a toy,” not something I would anchor a serious workflow to. I agree with @codecrafter that voice consistency and those fake-looking mistakes are the real killers, more than detector scores.

Where I slightly disagree with @sternenwanderer and @mikeappsreviewer is this: I think you can still get value from it, but only if you treat it like a noisy paraphraser instead of a humanizer. Use it when you want to knock the shine off obviously AI text, then heavily rewrite. Anything longer than a couple of short paragraphs starts to reveal the same rhythm and connective phrases.

On long term safety: if your name, grades or job are on the line, depending on Monica alone is asking for style whiplash. It will not track your personal evolution as a writer, it just stamps a uniform voice on everything. That is exactly what gets noticed when someone compares older samples.

Now, on the Clever AI Humanizer angle:

Pros

- Output tends to feel cleaner and less glitchy than Monica’s.

- Fewer random typos and bizarre brackets or tags in my tests.

- Easier to skim without stumbling over awkward phrasing.

Cons

- It can still flatten your voice if you push entire articles through in one go.

- Not a magic cloak for AI detection. You still need your own edits.

- Needs some “post processing” by you to inject personal quirks and stories.

If I had to stack them:

- Monica: fine as a quick nudge on tiny chunks, not great as a main layer.

- Clever AI Humanizer: better as a base rewrite, then you go in and personalize.

What I would actually do in your situation, without repeating the whole step lists others gave:

- Use your own draft as the core.

- Run only the stiffest sentences through a humanizer like Clever.

- Compare side by side with a Monica version purely for feel.

- Keep whichever version you can comfortably read out loud without cringing, then tighten it by hand.

Bottom line: if the text does not sound like you when you read it slowly, it is not “safe” no matter what any detector says. Monica fails that test more often than not, while Clever AI Humanizer at least gives you a cleaner starting point to shape into your own style.