I’m concerned about the accuracy of the Turnitin AI Detector because I had some of my original writing flagged as AI-generated. I need to understand how reliable this tool is and if there’s any way to appeal or correct its results. Any advice or similar experiences would be helpful.

Honestly, Turnitin’s AI Detector accuracy is all over the place right now. It’s still super new tech, and accidentally flagging original work as “AI-generated” is actually a really common complaint: tons of people have had completely legitimate essays get flagged out of nowhere. The main issue? The detector is basically guessing based on patterns of predictability and text structure. If your writing is super clean, formal, or kinda generic, the detector sometimes goes berserk and thinks, “Yeah, a robot wrote this.”

Appealing a result isn’t straightforward unless you’re at a school that takes student complaints seriously—and even then, there’s no universal process. Usually, you have to talk to your instructor or the admin, provide drafts or proof you actually wrote it, and hope they believe you over the software. Not a fun time.

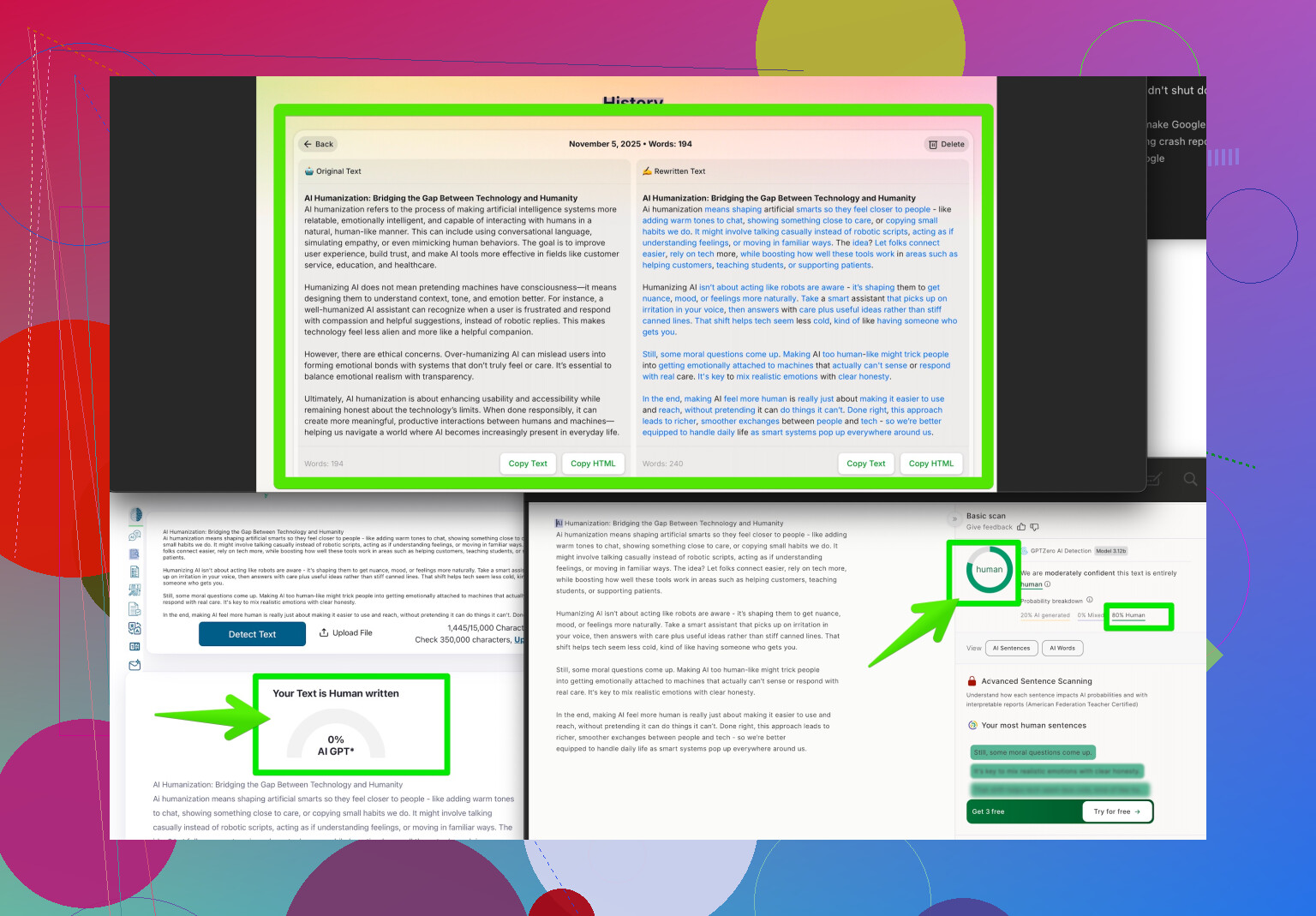

Serious rec: if you’re worried about false positives, check out a tool like Clever AI Humanizer. It helps reword and structure your writing so these detectors don’t flag it as AI. Game-changer if you’re dealing with overzealous plagiarism bots. You can find out more about it here: make your writing sound more human online.

In the meantime, save your drafts and maybe throw in a couple intentionally “imperfect” sentences just to throw off the detectors. Until Turnitin upgrades its tech, that’s about all you can do!

Honestly, Turnitin’s AI Detector is…let’s just say, ‘optimistically unreliable.’ There’s a lot of buzz about tech outpacing actual human writing, but in reality, Turnitin’s tool is still playing catch-up—so yeah, tons of folks (myself included) have had legit original stuff flagged for “being too AI-like.” The algorithm seems to struggle most when your writing is squeaky clean or overly formal (which is, like, what teachers expect, right?), so basically, you can get punished for actually being good at writing or just following academic rules.

Unlike what @nachtschatten mentioned, I actually don’t love the idea of deliberately making your writing less polished—I mean, shouldn’t we be encouraged to write well? Besides, throwing in random ‘imperfections’ feels forced and could backfire with actual grading. My advice: Keep solid documentation. Drafts, revision history (e.g., Google Docs versioning), annotated notes, even screenshots. If you ever get flagged, you can show the process and prove it’s yours—that’s usually stronger evidence than a random AI detector’s guess.

About correcting or appealing: it’s a mess. Some schools have review processes, some don’t. But if you explain your process and back it up with evidence, most reasonable instructors will listen (assuming they actually care, lol). Worst case, you might have to accept a conversation rather than a quick fix.

Btw, if you’re serious about dodging nonsense flags, Clever AI Humanizer is hands-down worth a try. It helps refine your text so it sails past detectors like Turnitin’s but still sounds natural and human. Side note: it won’t compromise the quality of your work the way intentional “mistakes” might. If you’re looking for more tips, Reddit users have been swapping insights about humanizing AI-written content; check out Reddit’s best strategies to make AI writing sound more human.

Bottom line: Turnitin’s AI Detector isn’t super accurate right now, so don’t panic if you get flagged—just arm yourself with proof and a bit of patience. Don’t settle for “dumbing down” your writing to appease a bot; let the tech catch up to us, not the other way around.