I recently went through a HIX bypass review and the outcome wasn’t what I expected. I’m confused about how the decision was made, what criteria were used, and whether there’s any way to appeal or submit additional information. Can someone explain how HIX bypass reviews typically work, what documentation is most important, and what steps I should take next to challenge or clarify the decision?

HIX Bypass AI Humanizer review, from someone who burned time and money on it

HIX Bypass throws a lot at you on first load. Big success claim, “99.5% success rate”, fancy logos from Harvard, Columbia, Shopify, all that. Looks solid at a glance. I went in expecting at least something usable.

I got something else.

Testing setup

I used two different text samples. Both started as obvious AI output. Then I ran each one through HIX Bypass and checked them against multiple detectors.

Detectors I used:

- ZeroGPT

- GPTZero

- The built-in “multi-detector” widget on the HIX Bypass site

I tried to keep it simple. Paste in raw AI text. Humanize with HIX Bypass. Paste the result into each detector. No tweaking.

Results

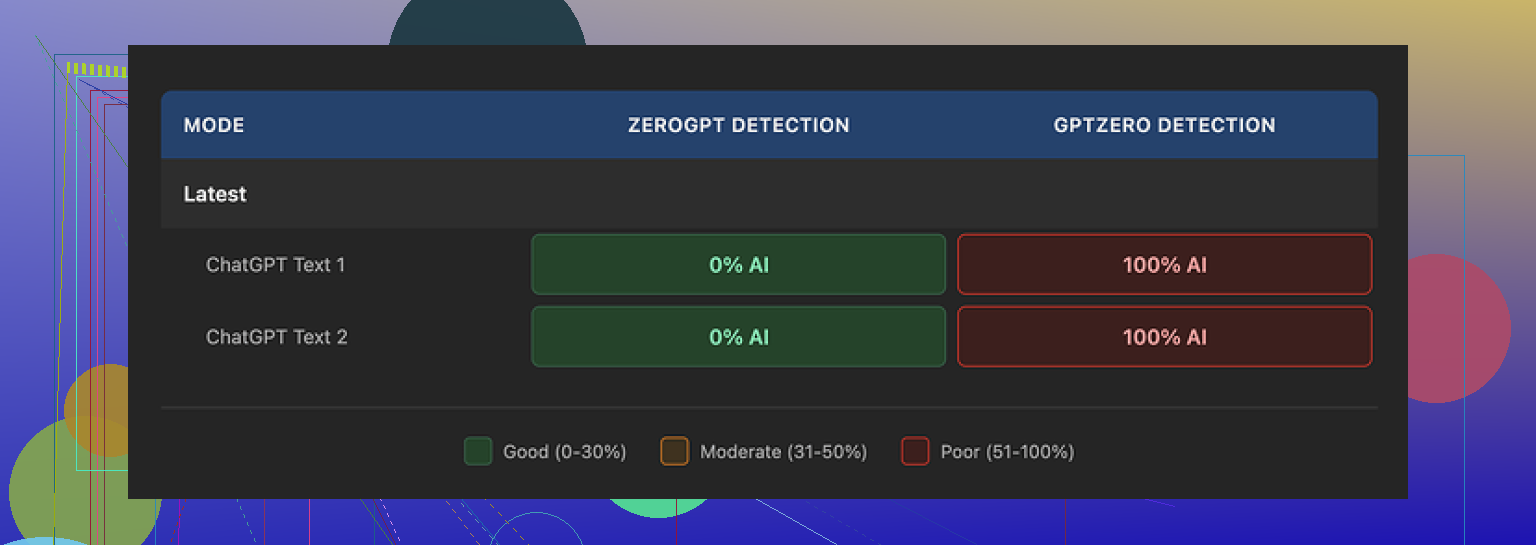

ZeroGPT

Both samples were clear. No issues. It labeled them as human text.

GPTZero

Total opposite. GPTZero marked both samples as 100 percent AI generated. No gray area.

HIX Bypass internal checker

The integrated tool showed stuff like “Human-written” across most of the detectors it aggregates. That view looked clean and safe. Problem was, once I manually pasted the same text into GPTZero myself, the result did not match what their tool had implied.

So the “multi-detector” screen felt misleading. It gave a sense of safety that did not hold up once I verified outside their widget.

Writing quality

Ignoring detectors for a second, I tried to look at the writing itself as if I had to submit it to a client or professor.

I would rate it 4 out of 10.

Problems I hit:

- It kept em dashes in the text, even though a lot of detectors treat that style as a sign of AI writing. That defeats the purpose of a “humanizer”.

- One of the outputs contained a broken sentence fragment in the middle of a paragraph. Not a typo, more like the generator cut off a thought and never finished it.

- Another output wrapped an entire sentence in square brackets for no clear reason. Not like a citation or an editor note, just full sentence in brackets. That would trip any human reader.

The tone also felt off. Slightly stiff, slightly generic, like someone trying to sound formal without understanding the topic. For a tool sold as a “bypass” layer, I expected something with better phrasing.

Limits and refunds

The free version is barely usable for testing.

- Free tier limit: 125 words per account. That is not 125 words per run; it is per account. I blew through that quicker than I expected.

- Refund rule: 3-day window, but only if you stay under 1,500 words processed.

So if you want to test it properly across a few detectors with multiple samples, you hit a weird tradeoff. Either you:

- Test enough text to know it does not work for your use case, and risk crossing the 1,500 word line, or

- Stay under that cap and never get a good feel for performance.

I did not like that structure. It punishes anyone who wants to run a serious trial. A lot of people will go slightly above the word limit without noticing, then find out they are not eligible for a refund.

Pricing and terms

On the pricing page, the Unlimited annual plan shows up as about $12 per year. On paper that looks cheap. Once you read their terms of service, the deal feels less stable.

Two things stood out:

- They give themselves room in the terms to adjust usage limits after you already paid. So “Unlimited” is more of a label than a guarantee.

- They grant themselves broad rights over whatever you paste into the tool.

There is also a note for free tier users. If you are on the free plan, your inputs can be used to train their models. So if you paste client work, unpublished drafts, or anything sensitive, you lose control fast.

This all matters if you work with NDAs, student work, or anything personal.

Overall take

Putting everything together:

- Detectors: Looks fine on ZeroGPT, fails hard on GPTZero. Their own integrated checker does not match a direct GPTZero test.

- Writing: Feels off, leaves obvious AI tells, and sometimes corrupts sentences.

- Limits: Free tier is too small for a real test. Refund rule is tight and tied to word count.

- Legal side: Terms let them change limits and reuse content. Free plan uses your text as training data.

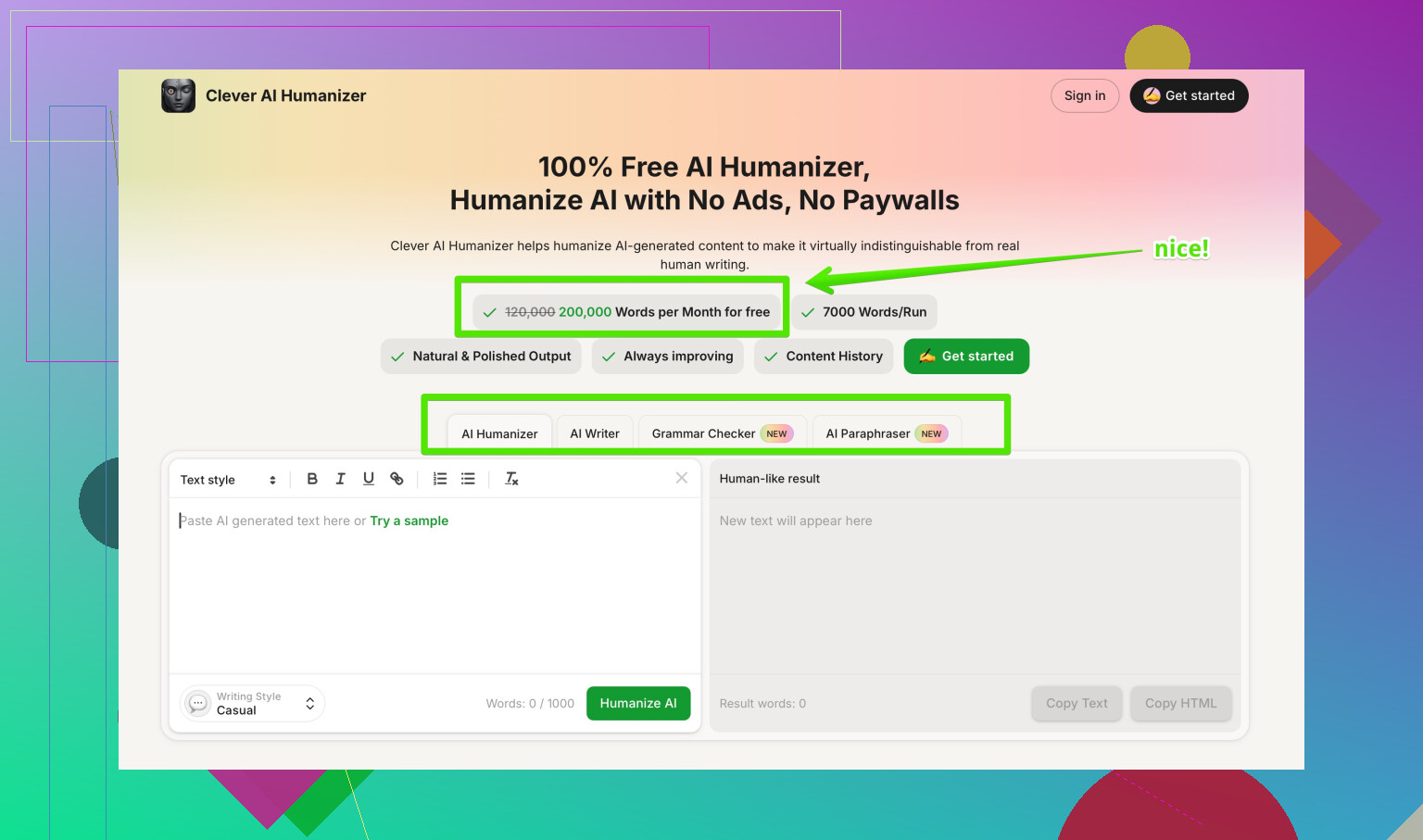

After this, I tried a different tool, Clever AI Humanizer, on the same general problem. I got more natural rewrites and better detector results, and I did not pay anything to test it. If you are curious, the writeup on that is here:

If you only care about ZeroGPT screenshots and do not worry about GPTZero or content rights, HIX Bypass might pass some quick checks. For anything serious, it did not meet the bar for me.

I went through something similar with a HIX bypass review, and yeah, the outcome felt random at first. Here is how I’d break it down so you know what likely happened and what to do next.

- How the decision was probably made

Most “HIX bypass” style checks rely on three things:

• AI detection scores from tools like GPTZero, ZeroGPT, and internal models

• Text features such as sentence length, repetition, punctuation style, and structure

• Policy checks like plagiarism risk and suspicious formatting

If your text still had:

• Repetitive phrasing

• Overly neat structure with similar sentence lengths

• Odd punctuation, brackets, or em dashes

a detector often flags it as AI, even if a human edited it.

Different detectors weigh signals differently. @mikeappsreviewer already showed that ZeroGPT cleared HIX text while GPTZero slammed it. Review teams often trust their preferred detector and ignore others.

- Why the outcome felt off

Common reasons your bypass review did not go your way:

• HIX output stayed too close to the original AI text. Small rewrites tend to fail strong detectors.

• The tool focused on passing its own “multi detector” widget, which does not always match independent checks.

• The reviewer or automatic system likely used a single stricter detector as the final authority.

I disagree a bit with the idea that HIX is useless across the board. For low risk content or platforms that rely on weaker detectors, it sometimes does enough. For strict reviews like academic checks or paid platforms, it often falls short.

- Criteria they likely used

You will not always get a breakdown, but in practice they usually look at:

• AI probability score above a threshold, often 70 to 90 percent

• Burstiness and perplexity scores that look too “smooth”

• Plagiarism or near-duplicate chunks if the text matches common AI outputs

• Topic sensitivity, stricter rules for school work, exams, legal, medical, or financial content

If one strong detector said “highly likely AI,” many reviewers stop there.

- What you can do next

Here is a practical path instead of guessing.

Step 1. Ask for clarification

Send a short message like:

“Could you tell me which AI detection tool or criteria were used in my HIX bypass review, and what score or threshold it failed on? I want to avoid the same issue next time.”

Do not argue in that first message. You want data, not a debate.

Step 2. Request an appeal or recheck

If they allow appeals, keep it focused:

• Acknowledge they flagged it.

• Explain what changes you made yourself, if any.

• Offer to provide drafts, timestamps, or revision history that show your edits.

Example:

“I revised the text myself before and after using tooling. I am happy to share earlier drafts or version history so you can see my manual edits. If possible, please review with a second method or human review.”

Step 3. Submit additional info

If they accept more info, send:

• Original draft you wrote by hand or in a basic editor

• Intermediate versions with visible changes

• A brief explanation of your process, for example: “Initial outline was mine, then I used a tool to restructure, then I rewrote sections line by line.”

Do not send a long story. Reviewers skim. Make it easy.

- How to avoid the same issue next time

Automation alone will not save you on strict checks. Some steps that work better in my experience:

• Start with your own outline in bullet points

• Use any AI tool only to brainstorm or restructure, not to produce final paragraphs

• Rewrite each sentence in your own voice

• Vary sentence length and structure

• Remove weird punctuation, brackets, long chains of commas, and double spaces

• Run your final text through at least two detectors yourself

Tools like HIX that promise “99.5 percent bypass” usually target softer detectors or marketing use. For tighter reviews, they disappoint.

If you still want a helper tool, I had more luck with Clever AI Humanizer than with HIX on stricter tests. It tends to produce more natural phrasing and keeps fewer “AI tells.” You still need to edit, but it reduces the heavy lifting. You can check it at

make your AI text sound more human

then run your own edits and detection checks on top.

- SEO-friendly version of your topic

HIX Bypass Review: Confused by your AI detection result and failed bypass check

If you went through a HIX bypass review and did not get the result you expected, you might wonder how the decision was made, which AI detection tools were used, what criteria flagged your content, and whether there is a way to appeal the outcome or submit more information for a second review.

Yeah, that “what just happened?” feeling with a HIX bypass review is pretty common.

From what @mikeappsreviewer and @yozora posted, your experience lines up with a bigger pattern: HIX looks shiny, claims huge success, then the actual review result feels random and opaque.

Here is what is probably going on, without rehashing all their steps.

- How the decision was likely made

Under the hood, the review process is usually a combo of:

- One primary AI detector they actually trust (often something strict like GPTZero or a proprietary variant)

- A simple threshold like “if score > X percent AI, auto fail”

- Maybe a quick manual skim if the content is high impact or flagged for other reasons

That flashy “multi detector” panel on HIX is mostly marketing. It aggregates a bunch of tools, but the actual reviewer or automated pipeline almost certainly did not weigh them all equally. They pick one as the “source of truth” and treat the rest as noise.

The confusing part is that HIX tries to make you feel safe by showing green lights across several detectors, while the one detector that actually matters for your review may still scream “AI.”
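To make that mismatch concrete, here is a toy sketch in Python. Everything in it is an assumption for illustration: the detector names, the scores, and the 80 percent cutoff are made up, and this is not how HIX or any specific review platform actually implements its checks. It only contrasts a majority-vote “widget” view with a review that trusts one primary detector and a fixed threshold.

```python
from dataclasses import dataclass

# Hypothetical "if score > X percent AI, auto fail" cutoff; 80 is an assumed value.
AI_THRESHOLD = 80.0


@dataclass
class DetectorResult:
    name: str        # illustrative detector label, not a real tool
    ai_score: float  # assumed 0-100 "percent AI" score


def widget_verdict(results: list[DetectorResult], threshold: float = AI_THRESHOLD) -> str:
    """Aggregate view: 'Human-written' if most detectors are at or under the threshold."""
    passing = sum(1 for r in results if r.ai_score <= threshold)
    return "Human-written" if passing > len(results) / 2 else "AI detected"


def review_verdict(results: list[DetectorResult], primary: str,
                   threshold: float = AI_THRESHOLD) -> str:
    """Review view: only the primary detector's score decides; the others are ignored."""
    for r in results:
        if r.name == primary:
            return "bypass failed" if r.ai_score > threshold else "passed"
    raise ValueError(f"primary detector {primary!r} not in results")


# Made-up scores: three lenient detectors and one strict one.
results = [
    DetectorResult("detector_a", 5.0),
    DetectorResult("detector_b", 12.0),
    DetectorResult("detector_c", 20.0),
    DetectorResult("strict_detector", 100.0),
]

print(widget_verdict(results))                     # Human-written
print(review_verdict(results, "strict_detector"))  # bypass failed
```

With three lenient detectors and one strict one, the aggregate view shows green while the single-detector review fails the exact same text. That is the “all green here, sudden fail there” pattern people in this thread ran into, and it is why asking which detector was the deciding one is the most useful clarification question.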

- Criteria they are probably using

Instead of the classic “perplexity” buzzwords, what usually matters is more basic:

- Overall AI probability score above a fixed threshold

- Overly consistent rhythm in sentence length

- Reused phrases that sound templated

- Slightly formal but bland tone

- Formatting quirks (odd brackets, too clean bullets, uniform punctuation)

I slightly disagree with the idea that HIX just fails because detectors are harsh. A big part of the problem is that HIX output often keeps the same logical structure and flow as the original AI draft. It rearranges and rephrases, but the “skeleton” stays machine-like. Strict detectors pick up on that pattern, even when the wording looks more human.

- Why it felt inconsistent with what you saw

You probably saw:

- HIX’s widget saying “human written” on multiple tools

- Then the actual review result saying “AI detected” or “bypass failed”

That inconsistency is not an accident. HIX is optimized to look like it bypasses detection. The review system you hit is optimized to not be fooled by surface level rewrites. Those are two very different goals.

So yeah, from your perspective it feels like: “All green here, sudden fail there.”

From their perspective it is: “Marketing tool says yes, our real detector says no, we trust the real one.”

- What you can still do about this review

Without repeating the same appeal template others suggested, here is a slightly different angle:

- Ask one very specific question:

“Was my text evaluated by a single AI detector as the deciding factor, and if so, which one and what was the approximate score range?”

You are not asking for internal policy, just tool + range. That is more likely to get answered than “explain your whole process.”

- If they allow another shot, do a heavier rewrite next time:

- Keep only your ideas and structure

- Completely rephrase each paragraph from scratch

- Change paragraph order where possible

- Cut anything that sounds like “template advice”

Using any bypass tool as the final pass is what burns people. Better workflow is:

you write → optional AI help for ideas → you rewrite manually → optional humanizer like Clever AI Humanizer for polishing → you manually fix tone and quirks.

- About HIX specifically

I am a bit less forgiving than @yozora here. HIX is only fine if:

- You only care about soft marketing checks

- The worst case is someone thinking “this sounds slightly robotic”

For anything involving grades, compliance, or accounts getting banned, it is a gamble. The tight free tier and sketchy refund conditions that @mikeappsreviewer highlighted make it more annoying to test properly too.

If you still want tooling in the mix, Clever AI Humanizer is less about “magic bypass” and more about making your text actually read like a human wrote it, which tends to incidentally work better with tougher detectors. You still need to edit, but it does a better job of breaking that AI “sameness.”

- Answering your last bit: can you appeal or add info

Short version:

- Yes, usually you can ask for clarification and sometimes a second review.

- Your best leverage is showing process: drafts, version history, and where you took over manually.

- Do not send a wall of text defending tools. Focus on “here is how I worked, here are my drafts, please review with human judgment or a second method if possible.”

If they flat out say “no appeals” or give you a canned response with no tool names or thresholds, that is basically code for “this decision is automated and final.” At that point, put your energy into changing process, not arguing.

- Better wording for your topic line

You mentioned “Best AI Humanizer Review on Reddit.” For something clearer and more SEO friendly you could go with:

Honest breakdown of top AI humanizer tools discussed on Reddit

That kind of phrasing signals what people actually get when they click and matches what folks searching for AI humanizer reviews are looking for.