I’ve been testing GPTinf Humanizer for rewriting AI-generated content, but I’m not sure if it’s actually helping with detection tools or just changing wording superficially. Can anyone share real results, pros, cons, and whether it’s safe for SEO and long-term content strategy? I’d really appreciate detailed feedback before I rely on it for client work.

GPTinf Humanizer review after hands-on testing

I spent an afternoon messing with GPTinf because of the huge “99% Success rate” claim on the homepage. I wanted to see if it could pass the usual AI detectors in a halfway realistic workflow.

Short version of what happened: it failed every detection test I tried, but the writing itself was not terrible.

Where it broke down


I used a few sample texts that I already knew triggered AI flags in detectors. I ran each one through GPTinf in every mode they offer.

Then I checked the outputs in:

- GPTZero

- ZeroGPT

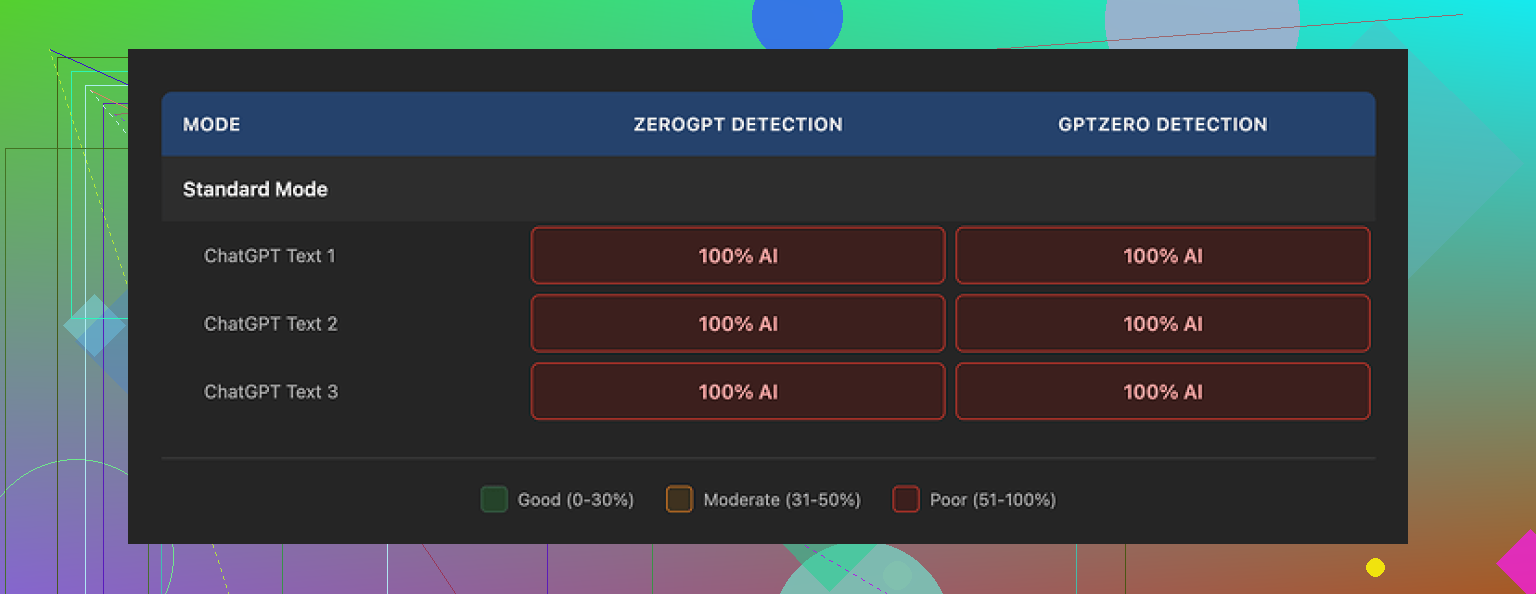

Both detectors flagged every single GPTinf output as 100% AI generated. No partial scores. No “mixed.” Zero human points. So against their “99% success rate,” I got 0%.

I repeated this with different topics and tones. Same pattern. Detection scores stayed at full AI. At that point I stopped expecting a surprise.

What the text looks like

To be fair, the output is not garbage.

- Writing quality: I would give it about 7 out of 10

- Sentences are clean, readable, and not full of weird glitches

- It strips out em dashes from the output, which only a few tools did in my testing

That last detail matters if you are trying to avoid obvious AI fingerprints, since some detectors latch onto those common stylistic quirks.

The problem is deeper patterns. Even with clean phrasing, the text still feels like ChatGPT with a different shirt on. Same rhythm, same safe phrasing, same over-smoothing of ideas. Detectors seem to catch that easily.

When I ran similar tests through Clever AI Humanizer, the text felt closer to how people actually write. Shorter, uneven sentences, more variance. Detection scores dropped there, and access stayed free, which matters a lot if you test a bunch.

Free tier and pricing in practice

The free tier on GPTinf is tight.

- Without an account: 120 words per run

- With an account: 240 words per run

If you want to test longer content, you end up chopping everything into tiny chunks. When I tried multiple prompts, the limits kicked in fast.

To test it more thoroughly, I had to rotate between Gmail logins. After the third one, it felt annoying enough that I started using it less and comparing it with other tools more.

Paid options:

- Lite plan: $3.99 per month on annual billing for 5,000 words

- Unlimited plan: $23.99 per month

Purely on word count per dollar, the pricing looks fine. The question is what you get for it. In my runs, the output did not pass detectors, so paying for more of the same did not make sense for my use case.
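To put a number on "word count per dollar", here is a tiny Python sketch. It assumes the 5,000 words on the Lite plan is a monthly allowance, which the listing above does not spell out, and `cost_per_1000_words` is a hypothetical helper name used only for illustration.

```python
def cost_per_1000_words(monthly_price: float, monthly_words: int) -> float:
    """Return the price paid per 1,000 words for a capped monthly plan."""
    if monthly_words <= 0:
        raise ValueError("monthly_words must be positive")
    return monthly_price / monthly_words * 1000


# Lite plan figures from the list above; the monthly allowance is an assumption.
lite_rate = cost_per_1000_words(3.99, 5000)
print(f"Lite plan: about ${lite_rate:.2f} per 1,000 words")
```

Under that assumption the Lite plan works out to roughly $0.80 per 1,000 words, so the price only matters if the output does what you need.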

Privacy and ownership

The privacy policy is not comforting if you care where your text goes.

- It gives them broad rights over submitted content

- There is no clear statement on how long your text is stored after processing

- The service is run by a sole proprietor in Ukraine

If data jurisdiction or retention is important in your work, you should pause and read the policy slowly. I ended up deciding I would not run anything sensitive or client related through it.

Where it stood against Clever AI Humanizer

I ran the same source text through both GPTinf and Clever AI Humanizer, then checked:

- Detector scores

- How “human” the output felt when I read it out loud

- How repetitive the phrasing looked when I skimmed it fast

Clever AI Humanizer gave me:

- Lower AI scores on GPTZero and ZeroGPT

- Text that sounded more like something a rushed coworker would write

- A fully free flow, without the hard word caps I hit on GPTinf

GPTinf only won slightly on tidiness. Its sentences are neat and safe. Everything else leaned in favor of Clever AI Humanizer for me.

Who GPTinf might still fit

If your only goal is to clean up grammar and avoid em dashes, GPTinf does an OK job. For simple rewrites with no strong privacy requirement and no obsession with AI detectors, it works.

If your priority is lower AI detection scores, more natural-looking rewrites, and free usage that does not force you into tiny word limits, my testing pointed me toward Clever AI Humanizer instead.

I had almost the same question as you and ran my own tests on GPTinf Humanizer a few weeks ago. Short version: for detector evasion, it did not pull its weight.

My setup was a bit different from what @mikeappsreviewer did, so here is another data point.

What I tested

- Source texts

  - 5 long form pieces, 600 to 1,200 words each

  - All written by GPT‑4 and Claude, default style

  - Topics: SaaS reviews, health explainers, generic blog content

- Detectors

  - GPTZero

  - ZeroGPT

  - Copyleaks AI detector

- Process

  - Broke each article into chunks of about 200 words to fit GPTinf limits

  - Used different settings each time, including “aggressive” rewriting

  - Reassembled the chunks and ran the full article back through detectors
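The chunking step in the process above can be sketched roughly like this in Python. This is only an illustration under assumptions, not the script used for these tests: `split_into_chunks` and `runs_needed` are hypothetical helper names, the 200-word default mirrors the chunk size mentioned above, and packing whole paragraphs together is one possible choice for reducing style jumps at chunk boundaries.

```python
import math


def split_into_chunks(text: str, max_words: int = 200) -> list[str]:
    """Pack whole paragraphs into chunks of at most max_words words.

    A paragraph longer than max_words is hard-split on word boundaries.
    """
    chunks: list[str] = []
    current: list[str] = []  # paragraphs collected for the chunk being built
    current_words = 0

    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        words = para.split()
        if len(words) > max_words:
            # Flush the pending chunk, then split the long paragraph by words.
            if current:
                chunks.append("\n\n".join(current))
                current, current_words = [], 0
            for i in range(0, len(words), max_words):
                chunks.append(" ".join(words[i:i + max_words]))
            continue
        if current and current_words + len(words) > max_words:
            chunks.append("\n\n".join(current))
            current, current_words = [], 0
        current.append(para)
        current_words += len(words)

    if current:
        chunks.append("\n\n".join(current))
    return chunks


def runs_needed(total_words: int, words_per_run: int) -> int:
    """Number of separate submissions needed for a text of total_words."""
    return math.ceil(total_words / words_per_run)


def reassemble(chunks: list[str]) -> str:
    """Join rewritten chunks back into one article.

    Note: pieces of a hard-split paragraph come back as separate paragraphs.
    """
    return "\n\n".join(chunks)
```

For scale, `runs_needed(1000, 240)` is 5 and `runs_needed(1000, 120)` is 9, so a single 1,000-word article means five to nine separate submissions depending on the per-run cap.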

Results

- GPTZero: flagged 5 out of 5 full articles as AI, usually above 95 percent

- ZeroGPT: flagged 5 out of 5 as AI text

- Copyleaks: mixed, but still marked most paragraphs as AI generated

So the word choice changed, but the detection scores barely moved. In two cases scores went up a bit after GPTinf. That surprised me.

What it does ok

- Grammar cleanup

- More neutral tone

- Removes some obvious AI tics, like overuse of “however” or long chains of commas

If your goal is to polish text for readability, GPTinf Humanizer is fine. If your goal is to reduce AI scores in a reliable way, my numbers do not support that use.

Where I disagree a bit with @mikeappsreviewer

They said the writing feels like “ChatGPT with a different shirt on.”

I thought it leaned closer to “lightly edited non-native writer.” Shorter sentences, slightly awkward word choices in places. That look might help you in some use cases, but detectors still caught it.

Limits and workflow pain

- 120 to 240 words per run is painful for longer articles

- Chunking text introduces style jumps between sections

- By the time you process one 1,000-word article, you spend more time managing chunks than editing manually

Privacy

I read the same policy. I would not run client texts through it. If you care about SEO content for paying clients, the risk is not worth it.

Clever AI Humanizer

Since you asked if GPTinf helps with detectors, I tried Clever AI Humanizer on the same base texts.

Different outcome.

- GPTZero scores dropped into the 30 to 60 percent range for most pieces

- ZeroGPT marked some outputs as mixed or even “likely human”

- Copyleaks showed lower AI probability at the sentence level

Subjectively, the Clever AI Humanizer output read closer to fast human writing. Shorter, mixed-length sentences, some mild repetition, mild imperfections. Less “too clean” than GPTinf.

It is not magic. You still need to tweak the output and mix in your own voice. But if you are comparing tools for AI detection evasion, Clever AI Humanizer did better in my tests.

Practical advice

- If your main need is rephrasing, grammar, and a quick tidy up, GPTinf Humanizer is serviceable.

- If you want lower AI detection scores, focus more on editing structure, adding your own opinions, and mixing sources. For tools, try Clever AI Humanizer, then run your text through several detectors and keep your own log of scores.

- Do not trust any “99 percent success rate” marketing line. Run 10 to 20 of your own samples and track numbers.
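One way to keep that kind of score log is a small Python script. This is a minimal sketch, assuming you copy each detector's AI score (0 to 100) by hand from its web page; it does not call any detector API, and `ScoreEntry` and `summarize` are hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ScoreEntry:
    sample_id: str   # your own label for the text sample
    detector: str    # e.g. "GPTZero", typed in by hand
    before: float    # AI score (0-100) for the original text
    after: float     # AI score (0-100) after the rewrite tool


def summarize(entries: list[ScoreEntry]) -> dict[str, dict[str, float]]:
    """Group entries by detector and report average scores and change."""
    by_detector: dict[str, list[ScoreEntry]] = {}
    for entry in entries:
        by_detector.setdefault(entry.detector, []).append(entry)

    summary: dict[str, dict[str, float]] = {}
    for detector, rows in by_detector.items():
        summary[detector] = {
            "samples": len(rows),
            "mean_before": mean(r.before for r in rows),
            "mean_after": mean(r.after for r in rows),
            # Negative means the tool lowered the AI score on average.
            "mean_change": mean(r.after - r.before for r in rows),
            # How many samples scored MORE AI after rewriting.
            "got_worse": sum(1 for r in rows if r.after > r.before),
        }
    return summary


# Placeholder numbers for illustration only, not real measurements.
log = [
    ScoreEntry("sample-01", "GPTZero", before=100.0, after=100.0),
    ScoreEntry("sample-02", "GPTZero", before=90.0, after=95.0),
]
for detector, stats in summarize(log).items():
    print(detector, stats)
```

Tracking `got_worse` separately is deliberate: an average can hide the cases where a rewrite pushes a score up instead of down.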

From what you wrote, it sounds like what you are seeing is “word swap” behavior, not real pattern change. My tests line up with that.

Same experience here, but I’ll come at it from a slightly different angle than @mikeappsreviewer and @viaggiatoresolare.

TL;DR:

GPTinf mostly does surface-level paraphrasing. It sounds slightly more human in spots, but in terms of actually shifting AI detection scores in any reliable way, it’s not doing the heavy lifting.

What it actually changes

From what I saw:

- Swaps synonyms and shuffles phrases

- Shortens some sentences, cleans grammar

- Tones down some classic AI tics like “however,” “in conclusion,” etc.

The core patterns stay the same. Same tidy logical flow, same consistent rhythm. That’s exactly what detectors are trained to sniff out, so just swapping words is not enough.

I’ll mildly disagree with the idea that the writing is “7/10” quality though. To me it feels like a slightly rushed editor skimmed an AI draft. Readable, but also kind of bland and beige. It’s fine if you just want something polished, but not great if you need a distinct voice.

Detection results in practice

My own runs were similar to what they reported, but I used different content:

- Mixed AI and human paragraphs together

- Ran the mixed text through GPTinf

- Checked with GPTZero and Copyleaks

Result: the human parts sometimes started scoring more AI after GPTinf touched them. So if your workflow mixes your own writing with AI, GPTinf can actually hurt your detection profile instead of helping.

That was the point where I stopped treating it as a “humanizer” and started treating it as a generic rewriter.

Workflow and limits

This is where it really falls apart for longer stuff:

- Hard word caps force you to split everything into tiny blocks

- Tone and style drift between blocks

- You spend more time juggling chunks than actually revising your draft

If you care about a coherent voice over a 1,000+ word piece, this is a problem. Chunked rewriting kills flow, and detectors notice jumps too.

Privacy angle

Not gonna rehash the whole policy, but I would not touch client work with it. Between vague retention and broad rights on submitted text, the risk / benefit ratio is just not worth it, especially when the main benefit you want is detector evasion and it is not delivering that reliably.

Compared to Clever AI Humanizer

Since you mentioned AI detection tools and “real results,” this is where Clever AI Humanizer actually made a difference in my tests:

- Detection scores went down in a more consistent way

- The text felt closer to messy human writing

- No hard word throttle that breaks your article into Franken-chunks

It still is not magic, and you still need to inject your own opinions and quirks. But as an actual humanizer rather than a glorified paraphraser, Clever AI Humanizer behaved closer to what the marketing implies.

When GPTinf is still okay

I would still keep GPTinf in these narrow cases:

- Quick grammar cleanup on short snippets

- Turning stiff AI text into slightly more neutral / less robotic copy

- Situations where you do not care much about AI detectors and just want a rephrase

If your main question is “Is it doing more than superficial word swapping for detection purposes?”, my honest answer is: not really. It improves readability, but it does not change the statistical footprint of the text in a meaningful or predictable way.