I used GPTHuman AI to generate and edit some important content, but now I’m not sure if it sounds natural, accurate, or trustworthy enough to publish. I’d really appreciate a detailed review, feedback on any issues, and suggestions on how to improve it so it reads more like a real human wrote it and less like obvious AI output.

GPTHuman AI review, from someone who spent too long testing this thing

GPTHuman markets itself as “the only AI humanizer that bypasses all premium AI detectors.” I went in curious, not optimistic, and, yeah, it did not match that line at all.

How I tested it

I used three different pieces of AI text that I normally use for benchmarking. They are around 300 to 800 words each, mixed structure, not fluff. I ran each one through GPTHuman, then checked the outputs on:

- GPTZero

- ZeroGPT

I did this three times per sample to see if the tool behaved differently across runs or if it mirrored text patterns.

Detection results

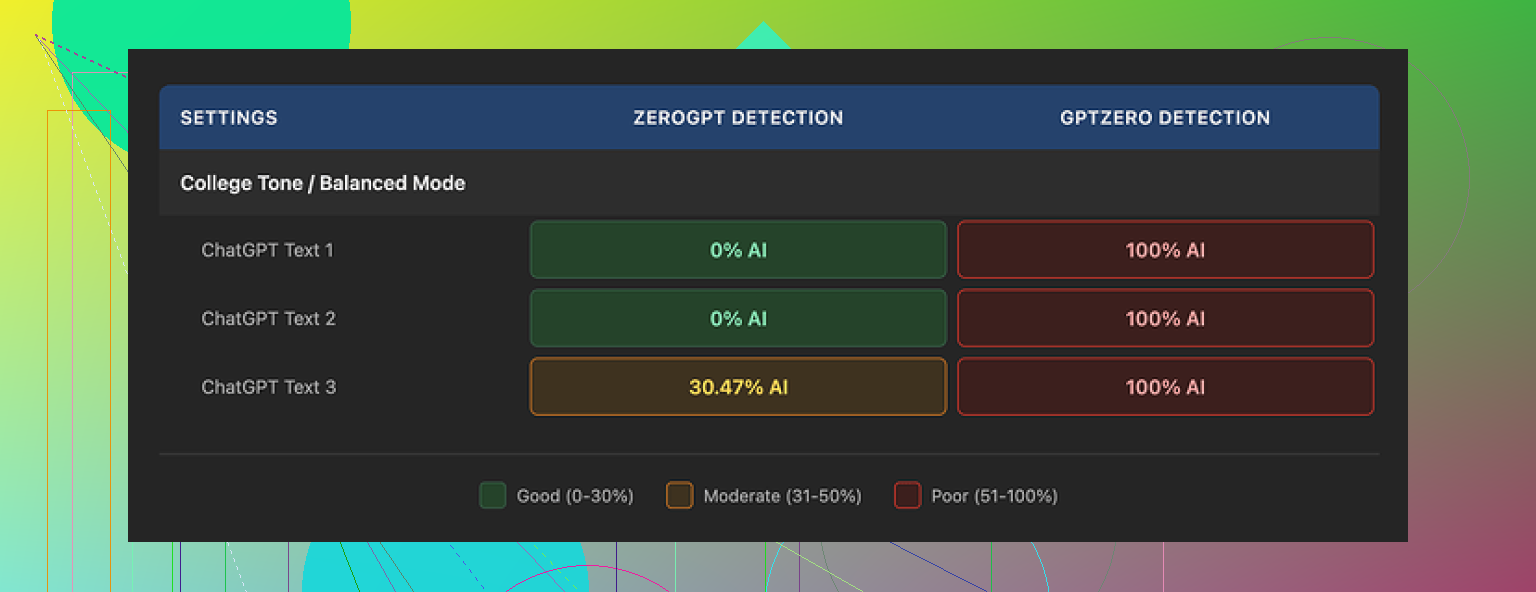

Short version, GPTHuman did not fool the detectors I care about.

- GPTZero

Every single output from GPTHuman showed up as 100% AI. No borderline score, no ambiguity. All three samples, each run, flagged as AI.

- ZeroGPT

A bit less brutal, but still not good.

- Two of the humanized samples came back as 0% AI.

- The third one got flagged around 30%. Repeated runs stayed in a similar range.

On GPTHuman’s own site, the “human score” number looked great. It showed high “pass” rates that did not line up with what GPTZero and ZeroGPT reported. So the internal score gave a kind of false sense of safety, while external tools did not agree at all.

If you rely on their internal meter without testing elsewhere, you will get burned.

Quality of the rewritten text

The outputs looked okay at a glance. Clean breaks between paragraphs, not a wall of text, and it did not output nonsense formatting. Once I started reading line by line, problems stacked up.

What I hit repeatedly:

- Subject–verb issues

Stuff like “the people is” type errors, sometimes more subtle. Enough to sound off if you are fluent.

- Incomplete sentences

Clauses cut off before they resolve. You can tell the original sentence got chopped and then never fixed.

- Bad word swaps

It tried to replace simple words with different ones, but the choice did not fit the sentence. Some read like they were run through a low-effort thesaurus.

- Weird endings

A few paragraphs closed with lines that barely matched the topic. It looked like the generator lost track of the thread in the last 1 or 2 sentences.

If you are doing anything for a client, teacher, or boss who reads attentively, you would need to heavily edit the output or rewrite parts from scratch. At that point, the “humanizer” does not save much time.

Word limits, free plan, and paywall problems

Second thing that got on my nerves was the free tier.

- Free limit

You get about 300 words total, not per run. Total. Once you burn through that, the site locks you out.

I wanted to run my usual test set, so I ended up creating three new Gmail accounts to finish everything. That alone told me this tool is not built with any sort of generous testing in mind.

Paid plans when I checked:

- Starter plan

- From $8.25 per month if billed annually.

- Unlimited plan

- $26 per month.

- “Unlimited” still has a hard cap of 2,000 words per output.

So even at the top plan, each run hits a 2k word ceiling. Long articles or reports need manual splitting, which slows down the workflow and creates inconsistency between chunks.
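If you do end up splitting by hand, a rough Python sketch like the one below would at least keep the cuts on paragraph boundaries. The function name `split_into_chunks` and the blank-line paragraph convention are my own assumptions for illustration, not anything GPTHuman provides. Only the 2,000 word cap comes from the plan described above.

```python
def split_into_chunks(text: str, max_words: int = 2000) -> list[str]:
    """Group whole paragraphs into chunks of at most max_words words.

    Assumes paragraphs are separated by blank lines. A single paragraph
    longer than max_words is kept whole and becomes its own oversized chunk.
    """
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for para in (p.strip() for p in text.split("\n\n")):
        if not para:
            continue
        n = len(para.split())
        # Close the current chunk if this paragraph would push it past the cap.
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks
```

This only handles the mechanical splitting. It does nothing about the tone drifting between chunks, which still needs a read-through.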

Refunds, data usage, and name usage

Some policy points you should know before you think about paying:

- No refunds

Purchases are non-refundable. If the tool does not meet your expectations, you are stuck.

- Data usage for training

Anything you submit is used to train their models by default. There is an opt-out, but you have to go and change it. If your content is sensitive, that is a risk.

- Company name in marketing

They reserve the right to put your company name in their promotional material unless you reach out and tell them not to. Many people never read that line in the terms.

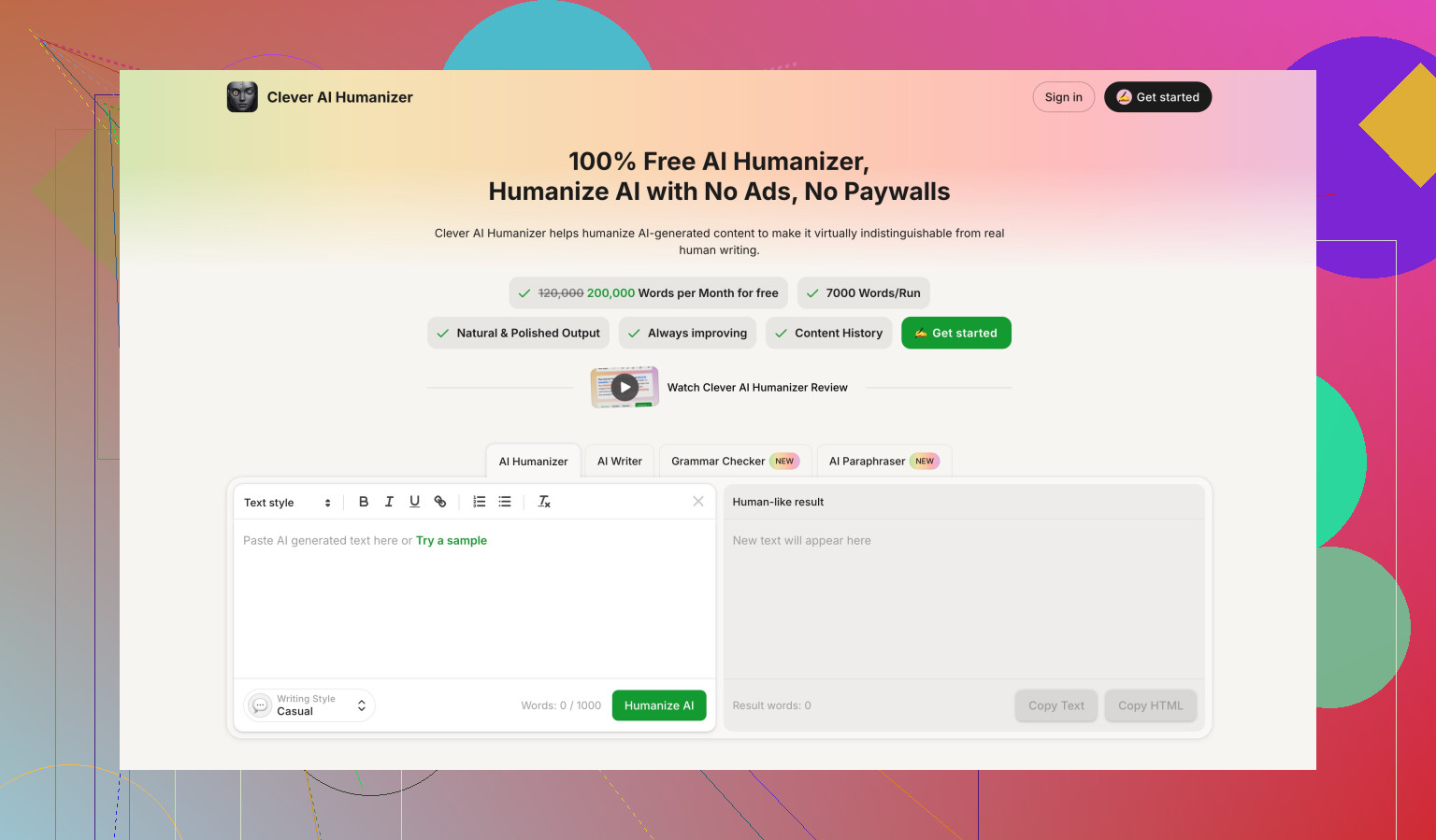

Comparison with Clever AI Humanizer

During the same round of testing, I also used Clever AI Humanizer.

In my tests:

- It scored stronger on detector resistance.

- It stayed free to use, without the tiny 300 word hard wall.

I am not saying it is perfect, but for what I needed, it behaved better than GPTHuman in both performance and access.

Final take after hands-on use

If you are expecting GPTHuman to reliably pass “all premium AI detectors,” my experience did not line up with that promise at all.

- GPTZero flagged every sample as 100% AI.

- ZeroGPT passed some outputs, but not consistently.

- The built-in “human score” did not match what external tools showed.

- The language quality needed a lot of manual cleanup.

- The free tier felt more like a teaser than a real plan.

If you want to test AI humanizers, run your own text through GPTHuman, then check it on external detectors like GPTZero and ZeroGPT before you pay. For me, Clever AI Humanizer ended up ahead in both score strength and cost.

Short answer for you. GPTHuman output is not safe to publish as is, especially if it is “important content”.

Here is how I would review and fix it, without repeating what @mikeappsreviewer already covered.

- Forget the “human score” on their site

Treat it as cosmetic.

Copy your GPTHuman text and run it through at least:

- GPTZero

- ZeroGPT

If both still flag AI strongly, do not rely on the “bypass” claim.

- Check if it sounds like you

Read your GPTHuman text out loud.

Red flags:

- Every sentence same length and rhythm.

- Overuse of generic phrases like “in today’s digital age”, “on the other hand”, “it is important to note”.

- No concrete examples that match your own work or experience.

If it does not sound like how you talk or write emails, it will feel off to readers who know you.
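Reading out loud is the real test, but if you want a quick mechanical first pass at the first two red flags, a small Python sketch along these lines could help. The name `rhythm_report`, the naive sentence splitter, and the three-phrase list are assumptions for illustration. The phrases are just the examples quoted above, so extend the list with your own.

```python
import re
import statistics

# Example phrases taken from the red-flag list above; add your own.
GENERIC_PHRASES = [
    "in today's digital age",
    "on the other hand",
    "it is important to note",
]

def rhythm_report(text: str) -> dict:
    """Report sentence lengths, their spread, and generic-phrase counts."""
    # Naive split on ., ! or ? followed by whitespace; fine for a rough check.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    # A spread near zero means every sentence has about the same length.
    spread = statistics.pstdev(lengths) if lengths else 0.0
    # Normalize curly apostrophes so the phrases match either style.
    normalized = text.replace("\u2019", "'").lower()
    hits = {p: normalized.count(p) for p in GENERIC_PHRASES if p in normalized}
    return {
        "sentence_lengths": lengths,
        "length_spread": spread,
        "generic_phrases": hits,
    }
```

There is no agreed threshold for what counts as too uniform, so treat the numbers as a prompt to reread the passage, not as a verdict.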

- Scan for typical GPTHuman issues

From what you wrote and what I have seen, focus on:

- Grammar glitches: inconsistent agreement, such as “the team are” in one paragraph and “the team is” in the next, or a mixture of US and UK spelling.

- Incomplete thoughts: sentences that start specific and end vague.

- Word swaps: weird synonyms like “pivotal leverage” or terms that feel forced.

Search your text for 3 to 5 key nouns or verbs. If the same few appear in every paragraph, it looks generated.
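A minimal Python sketch of that repetition check, assuming paragraphs are separated by blank lines. The function name `words_in_every_paragraph` and the small stopword set are made up for illustration. It does no part-of-speech tagging, so it reports any longer word that shows up in every paragraph, not strictly nouns or verbs.

```python
import re

# Small illustrative stopword set; extend it for real use.
STOPWORDS = {
    "that", "with", "this", "your", "from", "have", "they",
    "their", "will", "into", "more", "when", "what", "than",
}

def words_in_every_paragraph(text: str, min_len: int = 4) -> list[str]:
    """Return words of at least min_len letters found in every paragraph."""
    paragraphs = [p for p in text.split("\n\n") if p.strip()]
    if len(paragraphs) < 2:
        return []
    word_sets = []
    for para in paragraphs:
        words = re.findall(r"[a-z']+", para.lower())
        word_sets.append(
            {w for w in words if len(w) >= min_len and w not in STOPWORDS}
        )
    # Words common to all paragraphs are the candidates to vary or cut.
    return sorted(set.intersection(*word_sets))
```

On-topic terms will legitimately appear everywhere, so the output is a list to eyeball, not a list to delete.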

- Accuracy and trust check

Since you worried about “accurate” and “trustworthy”:

- Extract every factual statement. Dates, stats, definitions, tool names, features.

- Verify each one against a primary source. Official docs, reputable sites, your own data.

- Delete anything you cannot verify in 30 to 60 seconds.

If it is expert content, add 1 or 2 specific references, numbers, or cases from your own experience. That makes it feel grounded.

- Improve the tone so it feels human

Simple edits help a lot:

- Shorten long sentences into two.

- Add 2 or 3 specific “I” or “we” statements if it is opinion based.

- Replace generic claims with narrow ones, for example, change “Many people do X” to “Most of my clients do X” if that is true.

You can also pick one paragraph and rewrite it from scratch in your own words. Then compare it to the GPTHuman style. Make the rest match your version, not the other way around.

- When to throw it out and start over

I usually tell people:

- If more than 40 percent of sentences need edits, it is faster to rewrite the whole thing.

- If you see repeated grammar issues across multiple paragraphs, the text will keep betraying you to a careful reader.

That is where I slightly disagree with the idea that “you can use it with heavy editing”. For important work, a flawed base slows you down.
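The 40 percent rule is simple arithmetic, sketched here in Python. The function name `should_rewrite` is hypothetical, and the threshold is my personal rule of thumb from above, not a measured figure.

```python
def should_rewrite(total_sentences: int, sentences_needing_edits: int,
                   threshold: float = 0.40) -> bool:
    """True when the share of sentences needing edits exceeds the threshold."""
    if total_sentences <= 0:
        raise ValueError("total_sentences must be positive")
    return sentences_needing_edits / total_sentences > threshold
```

For example, 21 flagged sentences out of 50 is 42 percent, which is over the line, while 20 out of 50 is exactly 40 percent and is not.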

- About detectors and “bypassing”

If your goal is to reduce AI detection rather than improve quality, GPTHuman is not consistent, judging by the tests people have posted here.

In my own checks, Clever Ai Humanizer did a better job balancing:

- Fewer detection flags on GPTZero and ZeroGPT.

- Less broken grammar.

You still need to edit, but the starting point is cleaner, and it does not lock you behind a tiny free quota as fast.

- Concrete steps for your current piece

Here is a quick workflow you can follow today:

- Step 1: Paste your text into a doc editor, turn on spellcheck and grammar suggestions. Fix only clear errors.

- Step 2: Read it once out loud. Highlight anything that sounds robotic or stiff.

- Step 3: Replace generic phrases with 1 to 2 specific details from your situation or niche.

- Step 4: Verify all facts and delete or adjust anything you cannot confirm.

- Step 5: Run the cleaned version through GPTZero and ZeroGPT.

- Step 6: If scores still look high and you care about detectors, try running your own revised text through Clever Ai Humanizer, then do a light edit again.

If you paste a short excerpt here, 3 to 4 paragraphs, you will get much more targeted feedback. Right now the safe assumption is: do not hit publish without at least one careful editing pass.

Yeah, I’d be pretty nervous about hitting publish on important stuff straight out of GPTHuman, especially after what @mikeappsreviewer and @sternenwanderer already dug up.

I won’t repeat their whole test process, but I do disagree with one implicit assumption both kinda lean toward: that your main decision should orbit AI detectors. For “important content,” your first priority really should be:

- Does this reflect what I actually know and believe?

- Would I stand behind every sentence if someone quoted it back to me in a meeting/classroom/legal email?

Detectors matter if you’re under explicit “no AI” rules, but for reputation and trust, readers > detectors.

Here’s how I’d tackle your situation specifically, without re-running their whole workflow:

- Treat the GPTHuman draft as a rough brainstorm

Don’t think of it as “almost ready.” Think of it as “notes written by a sloppy assistant.”

Ask yourself, paragraph by paragraph:

- “If I had to explain this point out loud in 30 seconds, would I say it this way?”

If the answer is “no” more than twice in a row, that section gets rewritten from scratch, not patched.

- Look for “GPTHuman fingerprints”

From what you’ve described plus what others reported, there are some tells:

- Slightly-off grammar that no native speaker would naturally use

- Paragraphs that start on topic and end in vague platitudes

- Word swaps that sound “fancy” but not really specific

Where you see that, don’t tweak a word or two. Replace the whole sentence with your own direct version, even if it becomes shorter and “plainer.” Plain beats polished-but-weird every time.

- Accuracy pass that is brutally literal

You mentioned “accurate” and “trustworthy.” This is where most AI / “humanizers” quietly fail.

Do a very literal fact check:

- Highlight every concrete claim: numbers, dates, names, promises, “studies show,” “experts agree,” etc.

- For each one, either:

- Add the source in a comment, or

- Delete / rephrase it as opinion:

- “I’ve often seen…”

- “In my experience…”

If you can’t verify it quickly and you don’t personally know it to be true, it has no business in “important content.”
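If the piece is long, a rough Python sketch like this can do the highlighting step for you. The name `flag_claims` and the pattern are assumptions for illustration. It only catches sentences containing a digit or one of the two stock phrases quoted above, so names and promises still need a manual read.

```python
import re

# Matches any digit, or the stock phrases mentioned in the checklist above.
CLAIM_PATTERN = re.compile(r"\d|studies show|experts agree", re.IGNORECASE)

def flag_claims(text: str) -> list[str]:
    """Return the sentences that contain a number or a stock authority phrase."""
    # Naive split on ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if CLAIM_PATTERN.search(s)]
```

Each flagged sentence then gets a source, a rephrase as opinion, or the delete key.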

- Tone: pick one audience and write only for them

A lot of GPTHuman-style outputs try to be universal, which ends up sounding like no one in particular.

Ask: “Who exactly is this for?”

- My boss

- A professor

- Paying clients

- General blog readers

Then tighten the tone:

- Business: shorter sentences, fewer adverbs, concrete outcomes

- Academic: careful hedging, definitions, citations

- Blog/personal: more “I” and “you,” specific stories

If the draft reads like it’s trying to please all of them at once, that’s an AI smell.

- When to toss the whole thing

Here’s where I’m a bit harsher than @sternenwanderer:

If your piece is truly important (portfolio, client proposal, legal-adjacent, high-stakes email) and you find yourself:

- Fixing something in almost every sentence, or

- Feeling that you’re fighting the structure instead of improving it,

just keep the outline and rewrite the body from zero. It’s usually faster than patching a shaky base.

- Detectors and alternatives

If you do have to worry about AI checks, then yeah, their experiences line up with a lot of what I’ve seen: GPTHuman is not reliably “safe” for GPTZero, ZeroGPT, etc., and its own “human score” is mostly cosmetic.

In that scenario, I’d:

- First, rewrite / personalize as above

- Then, if you still want a humanizer as a final polish, Clever Ai Humanizer is a better candidate to test. In my own trials it broke fewer sentences, and it’s not strangled by that tiny free quota. Still needs your edit pass, but at least you’re not paying to fix obviously broken grammar.

- What you should literally do next

Concrete, no-frills steps for your current piece:

- Print it or put it in a plain-text view so formatting distractions are gone.

- Read it once straight through and mark: “keep,” “rewrite,” or “delete” in the margin for each paragraph.

- Rewrite the “rewrite” ones in your own words without looking at GPTHuman’s version. Only glance back if you forget a point.

- Do the accuracy sweep described above.

- Then, if AI detection is a hard requirement where you are, run the final version through GPTZero and ZeroGPT. If scores are still high and that actually matters for you, then consider passing your already-human rewrite through Clever Ai Humanizer and lightly editing again.

If you want sharper feedback, post one or two paragraphs (not the whole thing) and people can rip into it line by line. That’s usually the fastest way to see where GPTHuman is quietly sabotaging your voice.

Short version: treat your GPTHuman draft as a source of ideas, not as publish-ready text. What the others covered on detectors and editing is solid. I’ll focus on a different angle: risk, ownership, and workflow.

1. Decide what “important” really means for this piece

Before touching tools:

- If this affects money, legal exposure, or grades, assume:

- No AI output is safe without deep human rewrite.

- Your name and liability sit on top of that text, not GPTHuman’s.

For that kind of content, I would not rely on GPTHuman as the main writer at all. Use it only to generate:

- Structure outlines

- Variant phrasings

- Little bits of inspiration you then rewrite completely

That is harsher than what some people imply, but for high stakes that mindset saves you from “polished nonsense.”

2. Don’t let detectors drive your whole strategy

Here I partly disagree with the detector-heavy focus you see a lot.

If:

- There is no explicit “no AI” rule

- Your main concern is trust with readers, clients, or peers

Then prioritize:

- Clarity over camouflage

- Transparency over invisibility

AI detectors are noisy, inconsistent, and can flag human text. Building your process around beating them is a fragile strategy. Focus instead on:

- Specific examples that only you would know

- Your real stories, failures, and numbers

- Clear reasoning instead of generic claims

Ironically, that kind of writing tends to lower AI scores naturally.

3. Structural integrity check: does the piece actually say anything?

A problem I often see with GPTHuman-style outputs: the structure feels complete but the content is hollow.

Run a quick structural sanity test:

For each section / heading, write on a sticky note:

- “The one concrete takeaway of this section is: ____.”

If you cannot answer in one plain sentence, or if multiple sections have the same vague idea (“communication is important”), you do not have meaningful content yet. You have fluff.

In that case, do not polish. Add substance:

- A real example

- A mini case study

- A clear “do this / don’t do this” contrast

Only after the skeleton has solid bones is it worth worrying about tone and flow.

4. Ownership test: can you argue with yourself?

Pick 3 to 5 stronger claims in the GPTHuman text and argue against each one for 30 seconds, out loud.

If you find yourself thinking:

- “I actually do not fully agree with this”

- “I have never seen this in my own work”

- “I would hedge this a lot more in front of a client or professor”

then that line does not belong in a piece with your name on it. Rewrite it to match your actual conviction, or delete it.

This step is more important than any detector check. It protects your reputation.

5. Style: optimize for readability, not just “human-ness”

GPTHuman often tries to sound human by adding variety and synonyms, but that can reduce clarity.

Instead of asking:

- “Does this sound human enough?”

Ask:

- “Can someone skim this and instantly see the key points?”

Practical tweaks:

- Turn dense paragraphs into bullet lists for processes, pros/cons, steps

- Put the main conclusion at the start of a section, not the end

- Use one consistent register: either informal or formal, not both mixed

This is where a tool like Clever Ai Humanizer can actually help a bit, not as a magic bypass, but as a readability tuner.

6. Clever Ai Humanizer: how it fits in (and where it doesn’t)

Since it came up a few times, here is a blunt assessment.

Pros of Clever Ai Humanizer:

- Generally cleaner grammar than what people see from GPTHuman

- Tends to preserve meaning better while remixing phrasing

- Useful for:

- Smoothing awkward sentences you already wrote

- Making a heavily edited draft read more naturally

- Lightly reducing repetitive phrasing

Cons of Clever Ai Humanizer:

- It is still an AI layer:

- It can introduce subtle inaccuracies or change nuance

- It may re-add generic filler you just removed

- Not a replacement for:

- Your own fact checking

- Your own voice and examples

- If your base text is weak or vague, humanizing it just produces polished vagueness

So the sane way to use it for “important content”:

- Start from a draft you largely wrote or heavily rewrote yourself.

- Run only rough or stiff sections through Clever Ai Humanizer, not the whole article.

- Recheck meaning line by line afterward.

Used that way, it is a tool in the pipeline, not the engine of your content.

7. Where I align and where I diverge from others in the thread

- With what @mikeappsreviewer found on GPTHuman’s detection performance and grammar issues, I am on the same page: do not trust GPTHuman’s internal “human score” or publish unedited text.

- @sternenwanderer gave you a strong, practical proofreading and detector workflow that works if you are under strict anti‑AI rules.

- @byteguru is right to refocus on “Would I stand behind every sentence?” rather than obsess about detectors.

Where I go one step further:

- For genuinely critical content, I would treat both GPTHuman and any humanizer as optional sidekicks:

- OK for brainstorming, or for smoothing rough drafts

- Not OK as the primary author of anything that could be used against you

8. Concrete next move for your current piece

To keep this simple and not rehash the same checklists:

- Mark each paragraph as “Mine” (I could have written this from scratch), “Hybrid” (some of mine, some obviously machine), or “Alien” (I would never say it this way).

- Delete or fully rewrite the “Alien” ones.

- Rewrite the “Hybrid” ones so the dominant phrasing is yours.

- Only after that, if the flow feels stiff, try passing selected paragraphs through Clever Ai Humanizer and then manually adjust to restore your tone.

- Forget GPTHuman’s scores entirely. If you must check detectors, do it only at the very end as a sanity check, not as your compass.

If you want targeted help, share 2 or 3 anonymized paragraphs. At that point people can point out the exact lines that feel robotic or risky instead of talking in generalities.