I launched a cleanup app and the recent user reviews are really mixed. Some people praise the features and speed, while others complain about bugs, crashes, and confusing UI. I’m struggling to tell if overall sentiment is positive or leaning more negative, and I’m not sure what to fix first. Can anyone help me interpret the reviews and suggest how to prioritize improvements for better ratings and app store visibility?

Cleanup App (Phone Storage Cleaner) – my experience vs Clever Cleaner

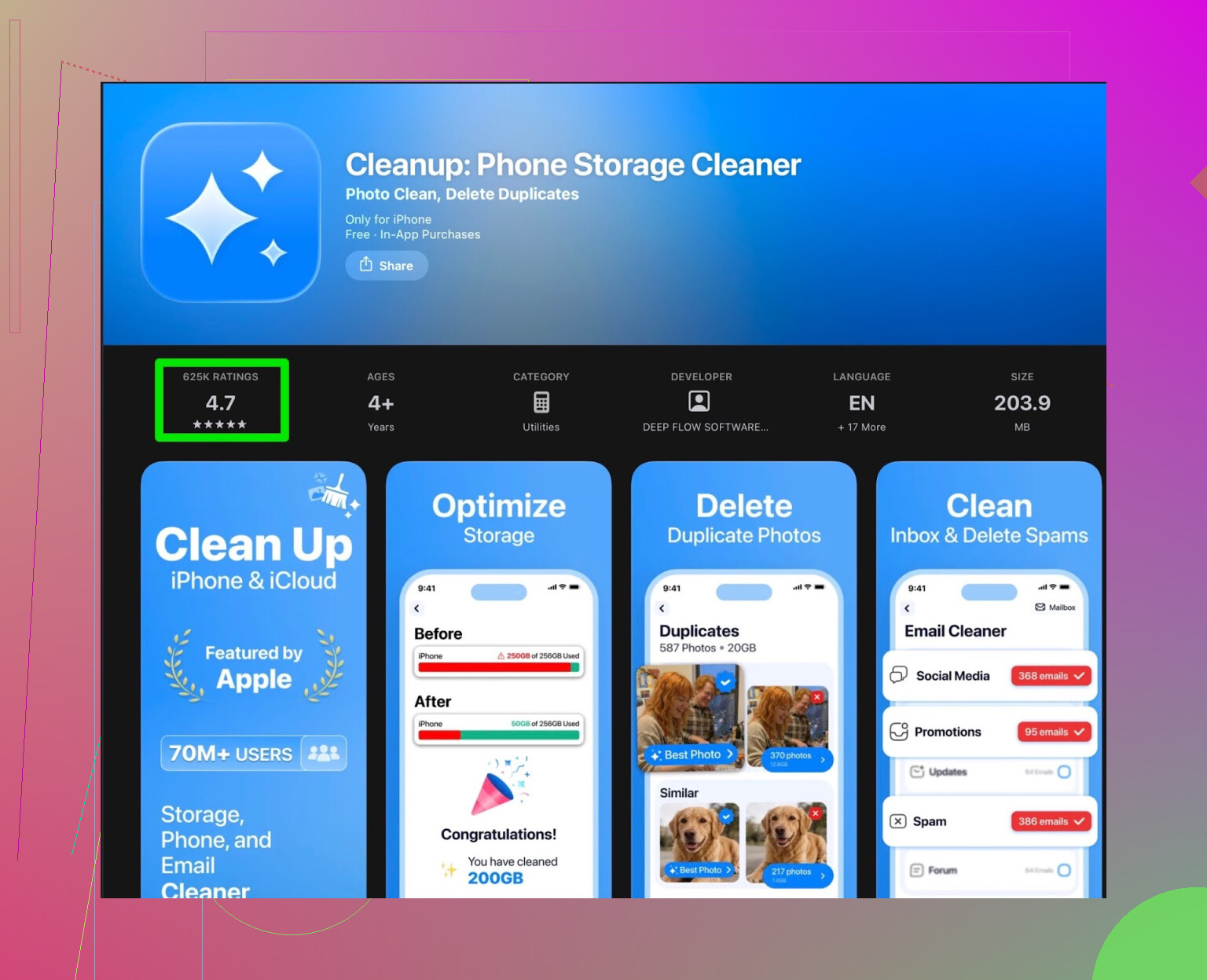

Cleanup App (Phone Storage Cleaner) review

My iPhone hit that point where every photo triggered the “storage almost full” popup. I didn’t want to delete random stuff blind from Settings, so I went hunting for one of those “smart cleaners” and ended up with Cleanup App (Phone Storage Cleaner).

On paper it looked solid. It scans the photo library, flags duplicates, near-duplicates, screenshots, old screen recordings. It also offers contact merge, video compression, a vault, some UI animations, the usual shiny things.

Here is what happened when I used it

First run, the scan did what it said. It grouped similar pictures together, surfaced a bunch of screenshots I forgot about, and listed a few huge videos.

Then the wall hit.

Most actions sat behind a subscription paywall. You see the junk, but you either:

• pay monthly or yearly to remove it in bulk

• or sit through a pile of ads to process small batches

The ad flow felt like this: tap delete, watch ad, confirm, repeat. After a few cycles, I started avoiding the app instead of using it.

Two things bugged me:

- Upsells everywhere

The app kept steering me to the subscription page. It felt like it was built around the payment, not around quick cleanup.

- Extra “features” that did nothing for storage

The animations and the secret vault looked more like distractions. They did not help reclaim space. I opened the vault once, closed it, and never touched it again.

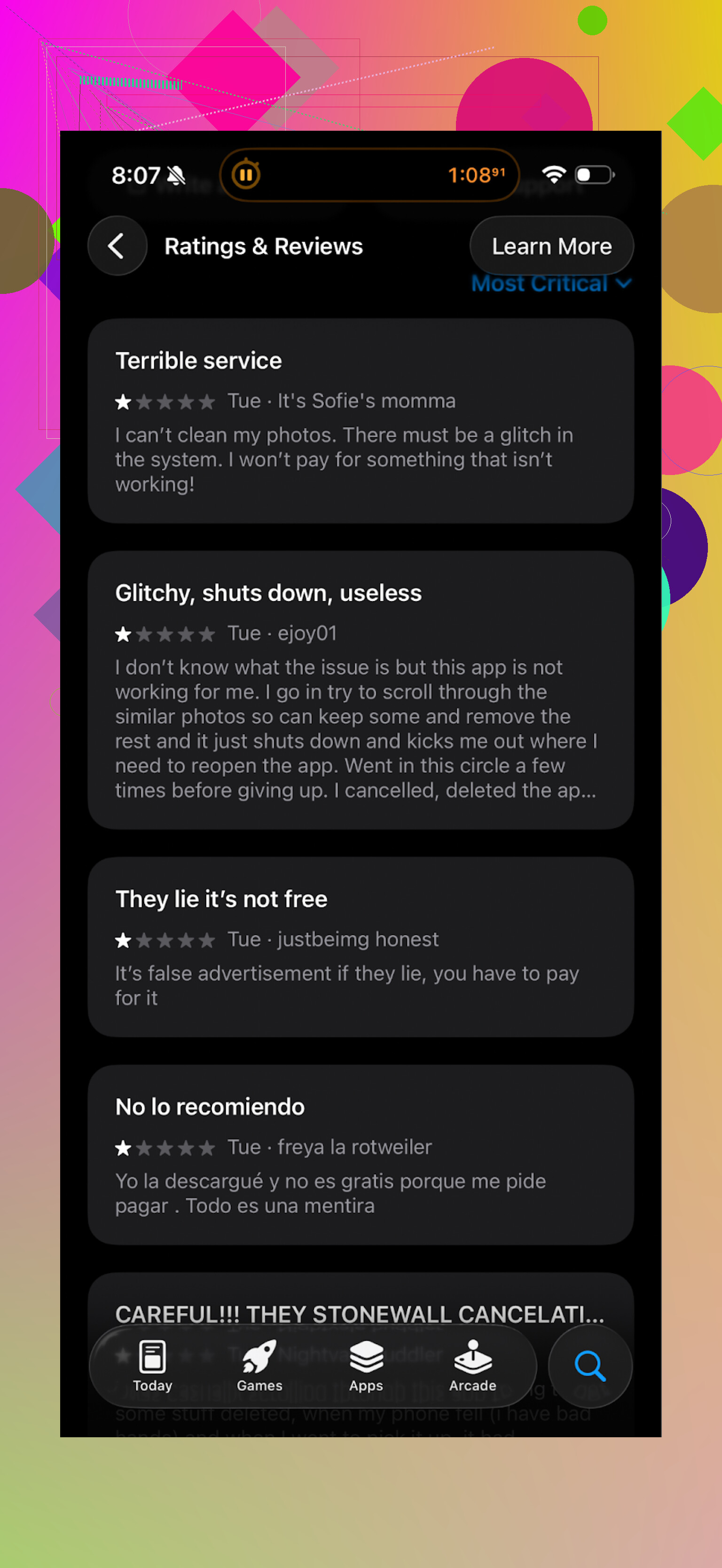

What other users are saying

Here is a snapshot from the store reviews that pushed me to re-check my own impressions:

The pattern was similar. People accepted the scan quality, but complained about:

• aggressive subscription prompts

• heavy ad usage in the free tier

• friction for basic cleanup tasks

That matched what I saw.

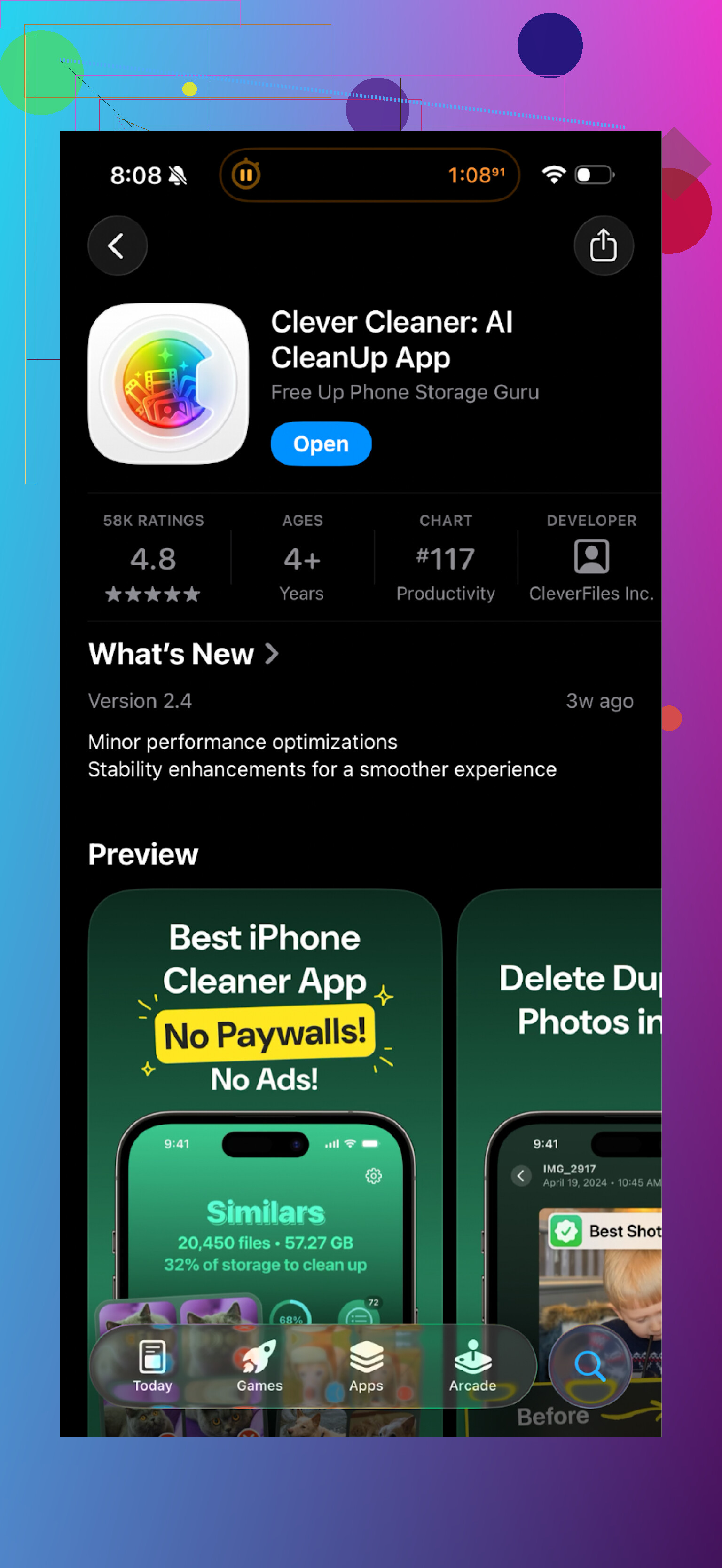

Why I moved to Clever Cleaner instead

After a couple of days of wrestling with Cleanup, I deleted it and tried Clever Cleaner.

Clever Cleaner link on the App Store:

The difference was pretty clear for me.

What felt better with Clever Cleaner

- Pricing behavior

It is free to use in a way that is not hostile. I was not hammered with recurring subscription prompts every second screen. I installed it, ran a scan, and deleted stuff without jumping through hoops.

- Speed and workflow

I opened it, hit scan, and in under a minute it had:

• grouped duplicates

• flagged similar photos (bursts, slight angle changes)

• listed large files and old videos

• shown screenshots as a separate bucket

I went down the list, unchecked anything important, and confirmed delete. Free storage went up by a few gigabytes in one session.

- Focused feature set

It sticks to:

• duplicate photos

• similar images

• large files

• screenshots

• basic storage stats

No vault, no random visual gimmicks. Less stuff to tap, easier to trust what the app is trying to do.

Here is a screen from it:

How I used Clever Cleaner in practice

If you want to try a similar workflow to what I did, this is what worked:

- Run a full scan once

Let it process everything. It might take a little while if you have years of photos.

- Clear in this order

• Screenshots first

• Obvious duplicates

• Large videos you know you do not need

• Old screen recordings

- Be strict with bursts

When it shows similar photos, I usually keep only 1 or 2 per event. The rest go away. This is where most of the space savings came from for me.

- Repeat monthly

I open it once a month, run a scan, clear new junk. That keeps the “storage almost full” warning away.

If you want more info on the app, these are the official links:

YouTube video review or demo:

Clever Cleaner homepage:

App Store direct link:

Final take

Cleanup App did work technically. It scanned correctly and found things that made sense to delete. The problem for me was the way it locked normal actions behind subscriptions and leaned on ads.

Clever Cleaner felt more straightforward, faster to use, and much less pushy about payments. If your goal is to clear storage on an iPhone without fighting the app constantly, I would start with Clever Cleaner instead of Cleanup.

Short answer to your topic title: mixed reviews usually hide a clear trend if you look at them right. Right now you are reading opinions. You need structure.

Here is how I would tackle it without repeating what @mikeappsreviewer already covered.

- Quantify the sentiment fast

• Export reviews to CSV if you can

• Tag 50 to 100 recent reviews by hand as: Positive, Neutral, Negative

• Add two tags per review: Topic (bugs, crashes, UI, pricing, speed, features, ads, etc.) and Version

• Count them in a simple sheet

You do not need NLP here. A manual hour gives you a clear picture. If 60 to 70 percent of recent reviews mention bugs or crashes, your sentiment is negative, even if your star average is 4.3.
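If you would rather script the counting than do it in a sheet, here is a minimal sketch. It assumes your hand-tagged export has columns named `sentiment`, `topic`, and `version`; those names and the sample rows are made up for illustration, not taken from any real export format.

```python
import csv
import io
from collections import Counter

# Hypothetical hand-tagged export; column names and rows are illustrative only.
SAMPLE_CSV = """sentiment,topic,version
Positive,speed,1.3.0
Negative,crashes,1.2.0
Negative,crashes,1.2.0
Neutral,UI,1.3.0
Positive,features,1.3.0
"""

def summarize(csv_text):
    """Return (sentiment shares in percent, topic counts among negative reviews)."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    total = len(rows)
    sentiment = Counter(r["sentiment"] for r in rows)
    shares = {label: round(100 * n / total) for label, n in sentiment.items()}
    negative_topics = Counter(
        r["topic"] for r in rows if r["sentiment"] == "Negative"
    )
    return shares, negative_topics

shares, negative_topics = summarize(SAMPLE_CSV)
print(shares)
print(negative_topics.most_common(3))
```

This is still just counting hand tags, not NLP. The useful part is the second result: which topics dominate the negative reviews.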

- Separate rating from text

A common pattern:

• 5-star with “good app but too many ads”

• 1-star with “works great but confusing at first”

Do this:

• For 1 and 2 stars, list the top 3 repeated words. Example: “crash”, “freeze”, “confusing”

• For 4 and 5 stars, list top 3. Example: “fast”, “easy”, “helped”

Your job is to see what positive users like and what negative users hate. Not all complaints weigh the same.
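A rough way to pull the repeated words per star bucket, as a sketch only. The stop-word list and the sample reviews are assumptions for illustration; real review text will need a longer stop-word list.

```python
import re
from collections import Counter

# Illustrative stop-word list; extend it for real review text.
STOP_WORDS = {"the", "a", "an", "and", "but", "it", "is", "to",
              "app", "i", "my", "on", "at", "too", "me"}

def top_words(reviews, stars, n=3):
    """Most common non-stop-words in reviews whose rating is in `stars`."""
    counts = Counter()
    for rating, text in reviews:
        if rating in stars:
            words = re.findall(r"[a-z']+", text.lower())
            counts.update(w for w in words if w not in STOP_WORDS)
    return counts.most_common(n)

# Made-up sample reviews as (star rating, text) pairs.
reviews = [
    (1, "Crash on scan, confusing menu"),
    (2, "Crash again and freeze"),
    (1, "Crash crash crash"),
    (5, "Fast and easy"),
    (4, "Fast scan, helped me"),
]

print("1-2 stars:", top_words(reviews, {1, 2}))
print("4-5 stars:", top_words(reviews, {4, 5}))
```

Raw word counts miss phrasing like “crashed” vs “crash”, so treat the output as a pointer to which reviews to read, not as a final answer.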

- Split by version

Many devs forget this.

• Add a column: App version from the review metadata

• If most crash reports hit version 1.2.0 and drop in 1.3.0, your trend is improving

• If complaints about UI spike after a redesign, your sentiment over time is worse, even if some people praise speed

This lets you answer your own question: “Is overall sentiment improving or declining across versions?”
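The per-version comparison can be sketched like this. The `(version, text)` pairs and the keyword list are hypothetical; substring matching is crude and is only meant to show the shape of the calculation.

```python
from collections import defaultdict

def crash_rate_by_version(reviews, keywords=("crash", "freeze")):
    """Share of reviews per app version whose text mentions any keyword."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for version, text in reviews:
        totals[version] += 1
        if any(k in text.lower() for k in keywords):
            hits[version] += 1
    return {v: hits[v] / totals[v] for v in totals}

# Made-up reviews as (app version, text) pairs.
reviews = [
    ("1.2.0", "Crashes on every scan"),
    ("1.2.0", "App freezes with big albums"),
    ("1.2.0", "Works fine"),
    ("1.2.0", "Crash at launch"),
    ("1.3.0", "Fast and stable now"),
    ("1.3.0", "Still one crash"),
    ("1.3.0", "Easy to use"),
    ("1.3.0", "Freed 3 GB"),
]

rates = crash_rate_by_version(reviews)
for version, rate in rates.items():
    print(f"{version}: {rate:.0%} of reviews mention a crash or freeze")
```

If the rate drops from one version to the next, as it does in this invented sample, the trend is improving even while old 1-star reviews stay on the page.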

- Prioritize by severity, not volume

From what you wrote:

• Pros: features, speed

• Cons: bugs, crashes, confusing UI

I would rank in this order:

1. Crashes and data loss

2. Major bugs that block cleanup flow

3. UI friction

4. Pricing or ads annoyance

5. Nice to have requests

You can have an ugly UI and still succeed if it is stable and predictable. You cannot have frequent crashes and keep positive sentiment.
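To make “severity, not volume” concrete, here is a small sketch that sorts a backlog by severity rank first and uses mention count only as a tie-breaker. The category keys, issue titles, and counts are invented for the example.

```python
# Severity ranks follow the order listed above (lower number = fix first).
# Category keys are hypothetical labels for this sketch.
SEVERITY = {
    "crash_or_data_loss": 1,
    "blocking_bug": 2,
    "ui_friction": 3,
    "pricing_or_ads": 4,
    "nice_to_have": 5,
}

def prioritize(issues):
    """Sort issues by severity first, then by mention count (descending)."""
    return sorted(
        issues,
        key=lambda i: (SEVERITY[i["category"]], -i["mentions"]),
    )

# Made-up backlog: the most-mentioned item is not the most urgent one.
issues = [
    {"title": "Add dark mode", "category": "nice_to_have", "mentions": 25},
    {"title": "Crash scanning large albums", "category": "crash_or_data_loss", "mentions": 6},
    {"title": "Too many ads", "category": "pricing_or_ads", "mentions": 14},
    {"title": "Delete button hard to find", "category": "ui_friction", "mentions": 9},
]

ordered = [i["title"] for i in prioritize(issues)]
print(ordered)
```

Here the crash goes first with 6 mentions, while the dark mode request goes last even though it has 25.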

- Turn reviews into a simple roadmap

Example structure for the next 2 or 3 releases:

Release A

• Fix top 3 crash scenarios mentioned in reviews

• Add simple in-app log upload so users send context when something fails

Release B

• Tackle 2 or 3 high-friction UI issues you see repeated. For example:

  - Confusing delete confirmation flows

  - Hard to find “undo”

  - Scary messaging around permanent deletion

Release C

• Address “feel” problems. For example:

  - Too many taps to do a batch cleanup

  - Inconsistent icons or labels

Keep a short public changelog and reference review keywords. People notice when you mention “fixed crash when scanning large albums” or “simplified bulk delete flow.”

- Communicate inside the reviews

Reply to some negative ones:

• Acknowledge the exact issue they mention

• Say which version includes a fix or change

• Ask them to retry and update their review if it works now

Target the reviews that mention crashes and confusion first. That shifts sentiment perception for new visitors, even before you fully solve every issue.

- Use competitors as a benchmark, not as a model

You saw @mikeappsreviewer talk about aggressive paywalls and extra features in some cleaners and a smoother flow in Clever Cleaner App. I would not fully copy that direction. Your app can lean in a slightly different way:

• Decide what your “one thing” is. For example: fastest safe cleanup, or clearest explainers before deletion.

• Strip features that distract from that. Extra vaults, random animations, unrelated tools often inflate support and confuse users.

Users who like Clever Cleaner App often appreciate focused flows and less friction. You can get similar sentiment by tightening your funnel without cloning them.

- Measure sentiment over time, not vibes

Every 2 weeks, track:

• Average rating for last 30 reviews

• Percentage of reviews mentioning “crash”

• Percentage that mention “easy” or “simple”

• Percentage that mention “confusing” or “hard”

Build a small table. If “crash” mentions drop from 40 percent to 10 percent after a hotfix, you know you are on the right track even if some old 1-star reviews still sit there.
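One way to produce a row of that table every two weeks, as a sketch. It assumes you have the reviews as `(stars, text)` pairs ordered oldest first; the sample data is invented, and plain substring matching will over-count (for example “hard” also matches “hardly”).

```python
def keyword_share(texts, keywords):
    """Percentage of review texts mentioning at least one keyword."""
    if not texts:
        return 0.0
    hits = sum(any(k in t.lower() for k in keywords) for t in texts)
    return 100 * hits / len(texts)

def snapshot(reviews, window=30):
    """Metrics for the most recent `window` reviews (list is oldest first)."""
    recent = reviews[-window:]
    texts = [text for _, text in recent]
    return {
        "avg_rating": sum(s for s, _ in recent) / len(recent) if recent else 0.0,
        "crash_pct": keyword_share(texts, ["crash"]),
        "easy_pct": keyword_share(texts, ["easy", "simple"]),
        "confusing_pct": keyword_share(texts, ["confusing", "hard"]),
    }

# Made-up reviews as (stars, text) pairs.
reviews = [
    (1, "Crash on launch"),
    (5, "Easy and fast"),
    (2, "Confusing delete screen"),
    (4, "Simple, works"),
]

row = snapshot(reviews)
print(row)
```

Append one `row` per check-in to a sheet or list and compare them over time; the movement between rows matters more than any single value.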

- UX fixes that usually help cleanup apps

Based on what users often say:

• Always show clear preview of files before deletion

• Show storage gained in a big, obvious way after action

• Show a simple progress indicator during scans

• Provide a short onboarding that explains “what is safe to delete” vs “what to check carefully”

• Offer an easy “review again later” bucket for uncertain items

These reduce “confusing UI” complaints without massive redesign.

- Answer your original question with data

After doing the quick tagging and counts, write a one line summary for yourself:

Example:

“Last 60 reviews: 40 percent positive mostly about speed, 20 percent neutral, 40 percent negative mostly about crashes on large libraries and confusing multi step delete.”

If your breakdown looks close to that, sentiment is mixed but fragile. A few focused releases can flip it to majority positive.

If it looks more like 70 percent negative focused on stability, treat it as a bugfix product phase, not a growth phase. Improve stability first, then worry about new features or new monetization.

Clever Cleaner App is a good reference point to see how competitors structure flows and messaging, but your best guide is your own review tags and counts. Once you do that small manual audit, you will stop guessing if reviews are “mostly positive” and you will have a clear percentage in front of you.

You’re overthinking “overall sentiment.” Mixed reviews are normal for utility apps. The real question is: mixed in what way and from which users.

@mikeappsreviewer and @hoshikuzu already covered how to tag and quantify reviews, so I’ll hit it from a different angle: pattern before percentage.

Here’s what I’d look at:

- Segment by user type, not just star rating

Read 30 to 40 recent reviews and mentally sort them into:

  - “Power users”: mention big libraries, tons of photos, or advanced use

  - “Drive‑by users”: installed, ran once, left a 1‑line review

  - “Frustrated paywall/ads people”

  - “Happy casuals”: “worked great, freed space” and vanish

If your crashes/confusing UI complaints come mostly from power users, sentiment is more negative than your stars suggest because those are the ones who’d stick with you and pay.

- Weigh when negativity happens in the funnel

Complaints at different steps hurt differently:

  - App crashes during first scan → catastrophic

  - Confusing screen right before delete → high friction

  - Confusing settings screen that 5% ever open → low impact

A handful of 1‑stars from people who crash on first run is way worse than 20 people whining about a slightly ugly UI after they already reclaimed space.

- Treat praise as a requirement, not a compliment

You said people praise speed and features. In this category, that’s base level. A fast scan that finds junk is table stakes.

Crashes and confusing UI are disqualifiers.

So even if the review ratio is “60% positive, 40% complaining,” if most complaints are about stability or feeling lost, I’d call the real sentiment “fragile borderline negative.”

I disagree a bit with the “don’t worry about UI until after crashes” mindset. In cleanup apps, “am I about to delete something important?” anxiety is huge. A confusing UI feels like a data‑loss risk, so it hits as hard as some bugs.

- Check expectations by comparison

Users absolutely compare you to competitors whether you want them to or not.

You already got a taste with the comments about paywalls/ads in other cleaners and some mentions of Clever Cleaner App. That app’s gotten positive feedback for:

  - Letting users do a “real” cleanup without being suffocated by a subscription wall

  - Keeping the feature set focused on photo and file cleanup, not random side tools

If your reviews say things like “too many features,” “hard to find the delete button,” or “I only wanted to clear photos,” that’s a sign your scope is bloated compared to something leaner like Clever Cleaner App. Those aren’t just design nitpicks; they’re sentiment killers.

- Turn “Is sentiment positive?” into one sentence you can defend

After you read and loosely bucket, literally force yourself to write:

  - “Most happy reviewers like X and Y.”

  - “Most unhappy reviewers are blocked by A and B.”

  - “If I were a new user reading the last 20 reviews, I’d think: ‘This app is [adjective].’”

If that adjective in your gut is anything like “risky,” “annoying,” or “confusing,” then sentiment is effectively negative, even if star math says something else.

- Use competitors for context, not self‑doubt

It’s fine that people bring up Clever Cleaner App. Don’t spiral. Use it as a calibration tool:

  - Install it.

  - Run the same scenario you expect your users to run.

  - Ask: “What did they not do that I did?”

Often the biggest win is what they left out: extra vaults, weird gamification, overcomplicated flows. That gives you a list of things you can strip or simplify to reduce complaints.

So, is your sentiment “overall positive” or “mostly complaints”?

If your recent page looks like:

- 4 or 5 stars: “works, fast, helped me free storage”

- 1 or 2 stars: “crashes,” “confusing,” “scared to delete stuff”

Then you are in “looks okay on paper, feels risky in practice” territory. I’d call that “mixed but tilted negative in terms of trust.”

Fix the trust killers (crashes, unclear deletion screens, weird surprise paywalls), then re‑check your last 30 reviews. If they shift toward “simple,” “safe,” “easy,” you’ll feel the sentiment change even before the star average moves.

You are not actually trying to answer “positive or mostly complaints.” You are trying to answer “do I have a product I can safely scale yet, or a product I still need to stabilize.”

The others already gave you great tagging and segmentation tactics, so I’ll zoom in on how to act on what you find, and I’ll disagree with a couple of points to sharpen it.

1. Treat reviews as live UX tests, not a poll

Instead of thinking “are people happy overall,” think:

- Where in the flow are they dropping or getting angry?

- What problem did they arrive with, and did you solve it quickly?

Take 10 recent negative and 10 recent positive reviews and reconstruct a story for each:

- What did they come to do?

- How far did they get?

- What blocked them or made them praise you?

You will notice patterns like:

- “Crashes during first scan” → you never even got evaluated on your good parts

- “UI confusing at the selection or confirmation step” → your app looks like it might delete the wrong stuff

- “Worked, fast, freed space” → problem solved, friction acceptable

This story view is more actionable than a sentiment percentage.

2. Stop giving each complaint equal weight

Here is where I slightly disagree with both “count words” and “just fix crashes first.”

Think in three buckets:

- Trust breakers

  - Crashes

  - Anything around deletion that feels uncertain

  - Surprise paywalls or bait‑and‑switch pricing

- Friction annoyances

  - Too many taps

  - Slightly messy layout

  - Mild slowness on old devices

- Preference / nice‑to‑have

  - Dark mode

  - Custom themes

  - Detailed stats, etc.

A single trust breaker is more important than twenty friction complaints. For cleanup apps, confusing UI around “what is being deleted” is a trust breaker, not just a UX nit. Put that on the same level as crashes.

So when you read reviews, rewrite each into:

- “Trust breaker”

- “Friction”

- “Preference”

Ship your roadmap to burn down trust breakers first.
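If you have too many reviews to rewrite by hand, a keyword pass can do a first rough sort into the three buckets. This is only a sketch: the keyword lists are my assumptions, and anything it gets wrong (or leaves unclassified) still needs a human read.

```python
# Buckets are checked in order of severity, so a review that mentions both
# a crash and dark mode lands in "Trust breaker".
# Keyword lists are illustrative assumptions; tune them to your own reviews.
BUCKETS = [
    ("Trust breaker", ["crash", "deleted", "lost", "paywall", "scam"]),
    ("Friction", ["too many taps", "slow", "messy", "cluttered"]),
    ("Preference", ["dark mode", "theme", "stats"]),
]

def classify(text):
    """Return the first (most severe) bucket whose keywords appear in the text."""
    lowered = text.lower()
    for bucket, keywords in BUCKETS:
        if any(k in lowered for k in keywords):
            return bucket
    return "Unclassified"

# Made-up review snippets.
samples = [
    "App crashed, also wish it had dark mode",
    "Slow on my old phone",
    "Please add a dark mode",
    "Nice",
]

for text in samples:
    print(f"{classify(text):14} | {text}")
```

Checking the most severe bucket first is the design choice that matters here: it keeps a trust breaker from being hidden behind a milder complaint in the same review.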

3. Use competitors as “negative space,” not templates

You already got analysis from @hoshikuzu, @sternenwanderer and @mikeappsreviewer about how other cleaners behave. Good. Now do this:

- Install your app and one competitor like Clever Cleaner App.

- Perform exactly one task: “Free at least 2 GB as a normal user who is a bit scared of losing photos.”

- Record yourself or take notes: where did you hesitate, where did you feel pushed, where did you feel reassured.

Pay special attention to what is not in Clever Cleaner App:

- No overloaded home screen

- No random “vault” nonsense if all you wanted was storage back

- No constant hard paywall on every tap

That “lack” is the negative space that users interpret as “simple” or “not annoying.” Your reviews that say “too many features,” “confusing,” or “ads everywhere” are pointing to this gap.

You do not need to copy their UX, but you probably need to strip or soften:

- Features unrelated to cleaning

- Aggressive monetization in the first session

- Extra steps before seeing clear value

4. A different way to read your “mixed” signal

Look only at recent reviews that mention:

- “freed space”

- “fast”

- “easy” or “simple”

versus

- “crash” / “freezes”

- “confusing” / “don’t know what it deletes”

- “too many ads” / “paywall”

Now decide:

- If a new user read only the last 15 reviews, would they think:

  - “This works and is safe enough”

  - or “This might work but looks risky/annoying”

If the gut read is “risky” or “annoying,” your overall sentiment is effectively negative, regardless of numeric star average.

This is where I part ways a bit with “mixed reviews hide a trend if you tag enough.” You can get lost in spreadsheets. A quick “what impression would a stranger get” check keeps you honest.

5. About Clever Cleaner App: where it helps and where it does not

Since it keeps getting mentioned, here’s a quick, concrete take to anchor your thinking.

Pros of Clever Cleaner App

- Focused feature set, mostly on actual cleanup tasks

- Reasonable free usage without feeling trapped by subscription prompts

- Clear buckets: duplicates, similar photos, screenshots, large files

- Fast perceived performance: scan → list → delete in a small number of steps

- Lower cognitive overload: fewer “what does this even do” elements

Cons of Clever Cleaner App

- Limited to a fairly narrow set of use cases, less “power tool” feel

- If someone wants very fine‑grained control or advanced rules, it can feel barebones

- Design is functional, not particularly customizable or delightful

- If you try to turn it into a “control center for everything,” it will not cover that niche

Use this as calibration:

- If your app tries to be a “super cleaner + vault + optimizer + gimmicks,” you will get more confusion complaints.

- If you narrow toward the “get in, free space, get out” pattern that Clever Cleaner App leans into, your sentiment will trend more stable.

6. Concrete actions that complement the other replies

Without rehashing their step lists, I’d push three practical moves:

- Add one “safety explain” screen where it actually matters

Not a long tutorial. A single, very clear message in the delete confirmation step such as:

  - What is about to be deleted

  - How to undo or where it goes

  - A simple visual preview

This alone can flip “confusing & scary” reviews into “felt safe enough.”

- Tone down first‑session monetization

If your reviews mention paywalls/ads similarly to what users complained about in Cleanup App, change the rule to:

  - First successful cleanup: must be possible with minimal friction

  - Strong monetization nudges after they have seen real value once

That first positive experience buys you goodwill that shows up in reviews.

- Instrument the funnel, not just read reviews

Reviews tell you what hurt; analytics tell you where and how often. Track:

  - Percentage of users who start a scan and never reach “results shown”

  - Percentage who see results but never confirm delete

  - Percentage who hit a paywall and quit

When review text says “confusing” or “crash,” you can tie it to a specific funnel drop. That lets you prioritize what to fix without guessing.
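The three percentages can be computed from raw event counts along these lines. The event names and numbers are hypothetical; substitute whatever your analytics tool actually exports.

```python
def funnel_dropoff(counts):
    """Percentage of users lost at each step relative to the previous step.

    `counts` maps step name to user count, in funnel order.
    """
    steps = list(counts.items())
    report = {}
    for (prev_name, prev_n), (name, n) in zip(steps, steps[1:]):
        lost = 100 * (prev_n - n) / prev_n if prev_n else 0.0
        report[f"{prev_name} -> {name}"] = round(lost, 1)
    return report

def paywall_quit_pct(shown, quit_after):
    """Percentage of users who quit right after seeing a paywall."""
    return round(100 * quit_after / shown, 1) if shown else 0.0

# Hypothetical event counts from an analytics export.
counts = {
    "scan_started": 1000,
    "results_shown": 800,
    "delete_confirmed": 400,
}

report = funnel_dropoff(counts)
print(report)
print("paywall quit:", paywall_quit_pct(shown=300, quit_after=120), "%")
```

In this invented example, half the users who see results never confirm a delete, which would point at the selection or confirmation screens rather than the scan itself.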

If you do just this:

- Reclassify complaints into trust vs friction

- Make one change to clarity at deletion

- Soften the first‑session monetization

- Compare your core task flow against something lean like Clever Cleaner App

you will see the “mixed” reviews sharpen into a clearer story within a couple of weeks of releases, and you will not need to obsess over whether the average is 4.1 or 3.8 to know if you are moving in the right direction.